Using Amazon ECS Exec to access ECS (Fargate) containers in private subnets

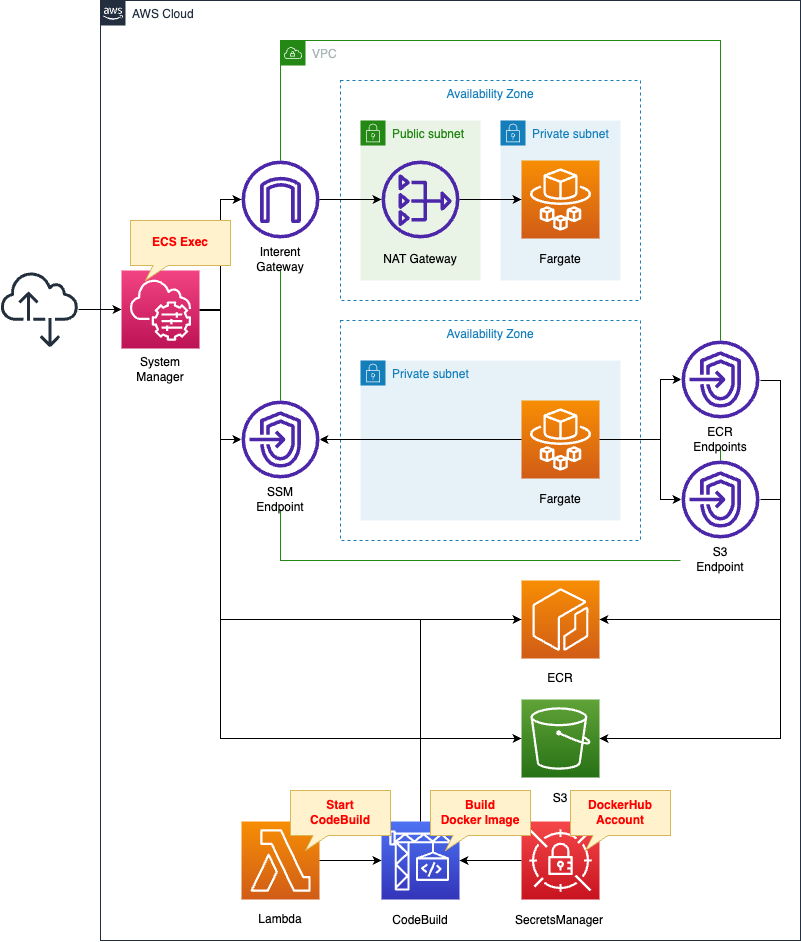

Amazon ECS Exec allows access to running ECS containers.

With Amazon ECS Exec, you can directly interact with containers without needing to first interact with the host container operating system, open inbound ports, or manage SSH keys. You can use ECS Exec to run commands in or get a shell to a container running on an Amazon EC2 instance or on AWS Fargate. This makes it easier to collect diagnostic information and quickly troubleshoot errors.

Using Amazon ECS Exec for debugging

This page will review how to access an ECS (Fargate) container in a private subnet.

Environment

Define ECS service tasks of type Fargate in each of the two private subnets.

Access each container using ECS Exec.

The two containers are reached over different paths, one per subnet:

- NAT Gateway

- VPC Endpoints

The ECR repository (S3) is accessed via the same route.

Build the Docker image we will be using with CodeBuild.

During the build, CodeBuild reads the DockerHub account information stored in Secrets Manager.

Create a Lambda function associated with the CloudFormation custom resource.

The function's job is to start a build in CodeBuild.

CloudFormation template files

The above configuration is built with CloudFormation.

The CloudFormation templates are available at the following URL:

https://github.com/awstut-an-r/awstut-fa/tree/main/127

Explanation of key points of template files

Network configuration for using ECS Exec

NAT Gateway

```yaml
Resources:
  IGW:
    Type: AWS::EC2::InternetGateway

  IGWAttachment:
    Type: AWS::EC2::VPCGatewayAttachment
    Properties:
      VpcId: !Ref VPC
      InternetGatewayId: !Ref IGW

  EIP:
    Type: AWS::EC2::EIP
    Properties:
      Domain: vpc

  NATGateway:
    Type: AWS::EC2::NatGateway
    Properties:
      AllocationId: !GetAtt EIP.AllocationId
      SubnetId: !Ref PublicSubnet

  PublicSubnet:
    Type: AWS::EC2::Subnet
    Properties:
      CidrBlock: !Ref CidrIp1
      VpcId: !Ref VPC
      AvailabilityZone: !Sub "${AWS::Region}${AvailabilityZone1}"

  ContainerSubnet1:
    Type: AWS::EC2::Subnet
    Properties:
      CidrBlock: !Ref CidrIp2
      VpcId: !Ref VPC
      AvailabilityZone: !Sub "${AWS::Region}${AvailabilityZone1}"

  PublicRouteTable:
    Type: AWS::EC2::RouteTable
    Properties:
      VpcId: !Ref VPC

  ContainerRouteTable1:
    Type: AWS::EC2::RouteTable
    Properties:
      VpcId: !Ref VPC

  RouteToInternet:
    Type: AWS::EC2::Route
    Properties:
      RouteTableId: !Ref PublicRouteTable
      DestinationCidrBlock: 0.0.0.0/0
      GatewayId: !Ref IGW

  RouteToNATGateway:
    Type: AWS::EC2::Route
    Properties:
      RouteTableId: !Ref ContainerRouteTable1
      DestinationCidrBlock: 0.0.0.0/0
      NatGatewayId: !Ref NATGateway

  PublicRouteTableAssociation:
    Type: AWS::EC2::SubnetRouteTableAssociation
    Properties:
      SubnetId: !Ref PublicSubnet
      RouteTableId: !Ref PublicRouteTable

  ContainerRouteTableAssociation1:
    Type: AWS::EC2::SubnetRouteTableAssociation
    Properties:
      SubnetId: !Ref ContainerSubnet1
      RouteTableId: !Ref ContainerRouteTable1
```

Create a NAT gateway on the public subnet.

Configure the route tables as follows so that tasks in the private subnet can reach the Systems Manager endpoints that ECS Exec relies on via the NAT gateway.

- Route table for the private subnet: the default route points to the NAT gateway

- Route table for the public subnet: the default route points to the Internet Gateway
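The routing described above can be checked from the command line once the stack exists. The following is a minimal sketch, assuming the AWS CLI is installed and configured; the function name `default_route_target` and the subnet ID in the usage comment are placeholders that do not appear in the templates.

```shell
# Hypothetical helper: print the targets of the default route (0.0.0.0/0)
# in the route table explicitly associated with a subnet.
# $1 = subnet ID
# Prints two columns: NatGatewayId and GatewayId ("None" when unset).
default_route_target() {
  aws ec2 describe-route-tables \
    --filters "Name=association.subnet-id,Values=$1" \
    --query "RouteTables[0].Routes[?DestinationCidrBlock=='0.0.0.0/0'].[NatGatewayId, GatewayId]" \
    --output text
}

# Usage (placeholder subnet ID):
#   default_route_target subnet-0123456789abcdef0
```

For the private subnet the first column should hold a NAT gateway ID, and for the public subnet the second column should hold an Internet Gateway ID.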

VPC Endpoints

```yaml
Resources:
  ContainerRouteTable2:
    Type: AWS::EC2::RouteTable
    Properties:
      VpcId: !Ref VPC

  ContainerRouteTableAssociation2:
    Type: AWS::EC2::SubnetRouteTableAssociation
    Properties:
      SubnetId: !Ref ContainerSubnet2
      RouteTableId: !Ref ContainerRouteTable2

  ContainerSecurityGroup:
    Type: AWS::EC2::SecurityGroup
    Properties:
      GroupName: !Sub "${Prefix}-ContainerSecurityGroup"
      GroupDescription: Deny all.
      VpcId: !Ref VPC

  EndpointSecurityGroup:
    Type: AWS::EC2::SecurityGroup
    Properties:
      GroupName: !Sub "${Prefix}-EndpointSecurityGroup"
      GroupDescription: Allow HTTPS from ContainerSecurityGroup.
      VpcId: !Ref VPC
      SecurityGroupIngress:
        - IpProtocol: tcp
          FromPort: !Ref HTTPSPort
          ToPort: !Ref HTTPSPort
          SourceSecurityGroupId: !Ref ContainerSecurityGroup

  S3Endpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      RouteTableIds:
        - !Ref ContainerRouteTable2
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.s3"
      VpcId: !Ref VPC

  SSMMessagesEndpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      PrivateDnsEnabled: true
      SecurityGroupIds:
        - !Ref EndpointSecurityGroup
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.ssmmessages"
      SubnetIds:
        - !Ref ContainerSubnet2
      VpcEndpointType: Interface
      VpcId: !Ref VPC

  ECRDkrEndpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      PrivateDnsEnabled: true
      SecurityGroupIds:
        - !Ref EndpointSecurityGroup
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.ecr.dkr"
      SubnetIds:
        - !Ref ContainerSubnet2
      VpcEndpointType: Interface
      VpcId: !Ref VPC

  ECRApiEndpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      PrivateDnsEnabled: true
      SecurityGroupIds:
        - !Ref EndpointSecurityGroup
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.ecr.api"
      SubnetIds:
        - !Ref ContainerSubnet2
      VpcEndpointType: Interface
      VpcId: !Ref VPC
```

Create a total of four VPC endpoints for two uses.

- To access containers using ECS Exec

- To pull images from the ECR repository

The AWS documentation describes the VPC endpoints required for ECS Exec as follows.

If you use the ECS Exec feature, you need to create the interface VPC endpoints for Systems Manager Session Manager.

Create the Systems Manager Session Manager VPC endpoints when using the ECS Exec feature

Define VPC endpoints for ssmmessages according to the above.
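After deployment, it can be useful to confirm that all four endpoints exist in the VPC. The following is a rough sketch, assuming a configured AWS CLI; the function names and the VPC ID in the usage comment are hypothetical, and the list of service suffixes is taken from the template above.

```shell
# Hypothetical helper: list the service names of all VPC endpoints in a VPC,
# one per line.
# $1 = VPC ID
list_endpoint_services() {
  aws ec2 describe-vpc-endpoints \
    --filters "Name=vpc-id,Values=$1" \
    --query 'VpcEndpoints[].ServiceName' \
    --output text | tr '\t' '\n'
}

# Hypothetical helper: print each expected service suffix that has no
# matching endpoint. Prints nothing when all four endpoints are present.
# $1 = VPC ID
missing_endpoint_services() {
  local present suffix
  present="$(list_endpoint_services "$1")"
  for suffix in s3 ssmmessages ecr.dkr ecr.api; do
    # Match the suffix at the end of a full service name such as
    # com.amazonaws.<region>.ssmmessages.
    if ! printf '%s\n' "$present" | grep -q "\.${suffix}\$"; then
      echo "$suffix"
    fi
  done
}

# Usage (placeholder VPC ID):
#   missing_endpoint_services vpc-0123456789abcdef0
```

An empty result suggests the endpoint set matches the template; any printed suffix names an endpoint that still needs to be created.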

Fargate

This page focuses on how to use ECS Exec to access the Fargate container in a private subnet.

For information on how to deploy Fargate on a private subnet, please see the following page.

IAM Role for Tasks

```yaml
Resources:
  TaskRole:
    Type: AWS::IAM::Role
    Properties:
      AssumeRolePolicyDocument:
        Version: 2012-10-17
        Statement:
          - Effect: Allow
            Principal:
              Service:
                - ecs-tasks.amazonaws.com
            Action:
              - sts:AssumeRole
      Policies:
        - PolicyName: ECSExecPolicy
          PolicyDocument:
            Version: 2012-10-17
            Statement:
              - Effect: Allow
                Action:
                  - ssmmessages:CreateControlChannel
                  - ssmmessages:CreateDataChannel
                  - ssmmessages:OpenControlChannel
                  - ssmmessages:OpenDataChannel
                Resource: "*"
```

ECS Exec places requirements on the IAM role for tasks.

The ECS Exec feature requires a task IAM role to grant containers the permissions needed for communication between the managed SSM agent (execute-command agent) and the SSM service.

Using ECS Exec

Following the official page cited above, the policy allows the four ssmmessages actions.

Task Definition

```yaml
Resources:
  TaskDefinition:
    Type: AWS::ECS::TaskDefinition
    Properties:
      Cpu: !Ref TaskCpu
      ContainerDefinitions:
        - Name: !Sub "${Prefix}-amazon-linux"
          Command:
            - "/bin/sh"
            - "-c"
            - "sleep 3600"
          Essential: true
          Image: !Sub "${AWS::AccountId}.dkr.ecr.${AWS::Region}.amazonaws.com/${ECRRepositoryName}:latest"
          LinuxParameters:
            InitProcessEnabled: true
          LogConfiguration:
            LogDriver: awslogs
            Options:
              awslogs-group: !Ref LogGroup
              awslogs-region: !Ref AWS::Region
              awslogs-stream-prefix: !Sub "${Prefix}-amazon-linux"
      ExecutionRoleArn: !Ref FargateTaskExecutionRole
      Memory: !Ref TaskMemory
      NetworkMode: awsvpc
      RequiresCompatibilities:
        - FARGATE
      RuntimePlatform:
        CpuArchitecture: ARM64
        OperatingSystemFamily: LINUX
      TaskRoleArn: !Ref TaskRole
```

The task definition was also written with reference to the following page.

https://docs.aws.amazon.com/AmazonECS/latest/userguide/ecs-exec.html#ecs-exec-enabling-and-using

The key point is the InitProcessEnabled property within the LinuxParameters property.

Setting this property to true has the effect quoted below.

If you set the task definition parameter initProcessEnabled to true, this starts the init process inside the container, which removes any zombie SSM agent child processes found.

Using ECS Exec

Service

```yaml
Resources:
  Service1:
    Type: AWS::ECS::Service
    Properties:
      Cluster: !Ref Cluster
      LaunchType: FARGATE
      DesiredCount: 1
      EnableExecuteCommand: true
      TaskDefinition: !Ref TaskDefinition
      ServiceName: !Sub "${Prefix}-service1"
      NetworkConfiguration:
        AwsvpcConfiguration:
          SecurityGroups:
            - !Ref ContainerSecurityGroup
          Subnets:
            - !Ref ContainerSubnet1

  Service2:
    Type: AWS::ECS::Service
    Properties:
      Cluster: !Ref Cluster
      LaunchType: FARGATE
      DesiredCount: 1
      EnableExecuteCommand: true
      TaskDefinition: !Ref TaskDefinition
      ServiceName: !Sub "${Prefix}-service2"
      NetworkConfiguration:
        AwsvpcConfiguration:
          SecurityGroups:
            - !Ref ContainerSecurityGroup
          Subnets:
            - !Ref ContainerSubnet2
```

Two services are defined.

The configuration itself is exactly the same, but the destination subnet is different.

Service 1 communicates via the NAT gateway, and Service 2 communicates via the VPC endpoints.

The key point is the EnableExecuteCommand property.

Setting this property to true enables ECS Exec for the service and its tasks.
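Whether ECS Exec is actually active on a running task can be checked with `aws ecs describe-tasks`. The sketch below assumes a configured AWS CLI; the function names are hypothetical, and the cluster name and task ID are passed in by the caller.

```shell
# Hypothetical helper: print the enableExecuteCommand flag of a task.
# $1 = cluster name, $2 = task ID or ARN
exec_enabled() {
  aws ecs describe-tasks \
    --cluster "$1" \
    --tasks "$2" \
    --query 'tasks[0].enableExecuteCommand' \
    --output text
}

# Hypothetical helper: print the last reported status of the managed
# ExecuteCommandAgent for each container in the task.
# $1 = cluster name, $2 = task ID or ARN
exec_agent_status() {
  aws ecs describe-tasks \
    --cluster "$1" \
    --tasks "$2" \
    --query "tasks[0].containers[].managedAgents[?name=='ExecuteCommandAgent'].lastStatus" \
    --output text
}

# Usage (placeholder values):
#   exec_enabled my-cluster 0123456789abcdef0123456789abcdef
#   exec_agent_status my-cluster 0123456789abcdef0123456789abcdef
```

If the flag is set but the agent never reaches a running state, the network path to ssmmessages or the task role permissions are the usual places to look.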

(Reference) Automatically push test images to ECR using CloudFormation custom resources and CodeBuild

```yaml
Resources:
  CodeBuildProject:
    Type: AWS::CodeBuild::Project
    Properties:
      Artifacts:
        Type: NO_ARTIFACTS
      Cache:
        Type: NO_CACHE
      Environment:
        ComputeType: !Ref ProjectEnvironmentComputeType
        EnvironmentVariables:
          - Name: DOCKERHUB_PASSWORD
            Type: SECRETS_MANAGER
            Value: !Sub "${Secret}:password"
          - Name: DOCKERHUB_USERNAME
            Type: SECRETS_MANAGER
            Value: !Sub "${Secret}:username"
        Image: !Ref ProjectEnvironmentImage
        ImagePullCredentialsType: CODEBUILD
        Type: !Ref ProjectEnvironmentType
        PrivilegedMode: true
      LogsConfig:
        CloudWatchLogs:
          Status: DISABLED
        S3Logs:
          Status: DISABLED
      Name: !Ref Prefix
      ServiceRole: !GetAtt CodeBuildRole.Arn
      Source:
        Type: NO_SOURCE
        BuildSpec: !Sub |
          version: 0.2

          phases:
            pre_build:
              commands:
                - echo Logging in to Amazon ECR...
                - aws --version
                - aws ecr get-login-password --region ${AWS::Region} | docker login --username AWS --password-stdin ${AWS::AccountId}.dkr.ecr.${AWS::Region}.amazonaws.com
                - REPOSITORY_URI=${AWS::AccountId}.dkr.ecr.${AWS::Region}.amazonaws.com/${ECRRepositoryName}
                - COMMIT_HASH=$(echo $CODEBUILD_RESOLVED_SOURCE_VERSION | cut -c 1-7)
                - IMAGE_TAG=${!COMMIT_HASH:=latest}
                - echo Logging in to Docker Hub...
                - echo $DOCKERHUB_PASSWORD | docker login -u $DOCKERHUB_USERNAME --password-stdin
                - |
                  cat << EOF > Dockerfile
                  FROM amazonlinux:latest
                  EXPOSE 80
                  EOF
            build:
              commands:
                - echo Build started on `date`
                - echo Building the Docker image...
                - docker build -t $REPOSITORY_URI:latest .
                - docker tag $REPOSITORY_URI:latest $REPOSITORY_URI:$IMAGE_TAG
            post_build:
              commands:
                - echo Build completed on `date`
                - echo Pushing the Docker images...
                - docker push $REPOSITORY_URI:latest
                - docker push $REPOSITORY_URI:$IMAGE_TAG
      Visibility: PRIVATE
```

CodeBuild builds the container image and pushes it to the ECR repository.

In this case, we will use the latest version of Amazon Linux as a base.

For details, please refer to the following page.

Architecting

Use CloudFormation to build this environment and check its actual behavior.

Create CloudFormation stacks and check the resources in the stacks

Create CloudFormation stacks.

For information on how to create stacks and check each stack, please see the following page.

After reviewing the resources in each stack, the main resources created in this case are as follows:

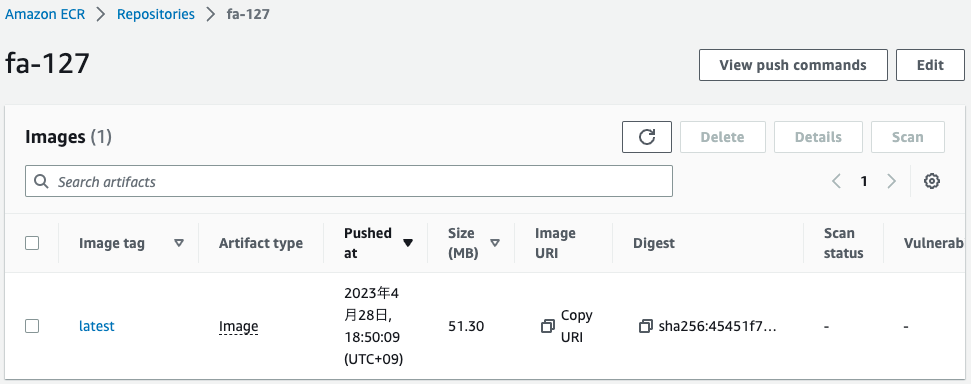

- ECR repository: fa-127

- ECS cluster: fa-127

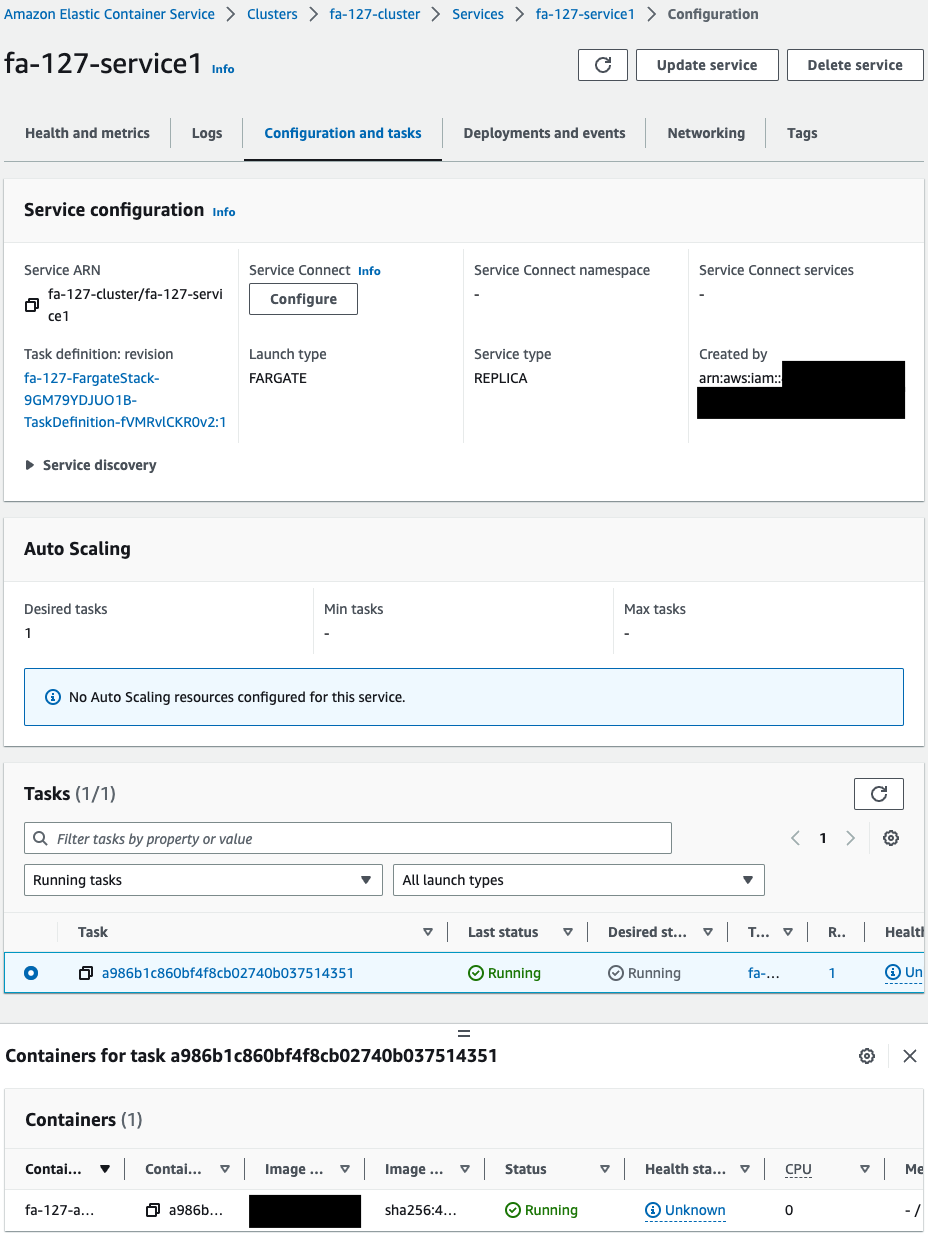

- ECS service 1: fa-127-service1

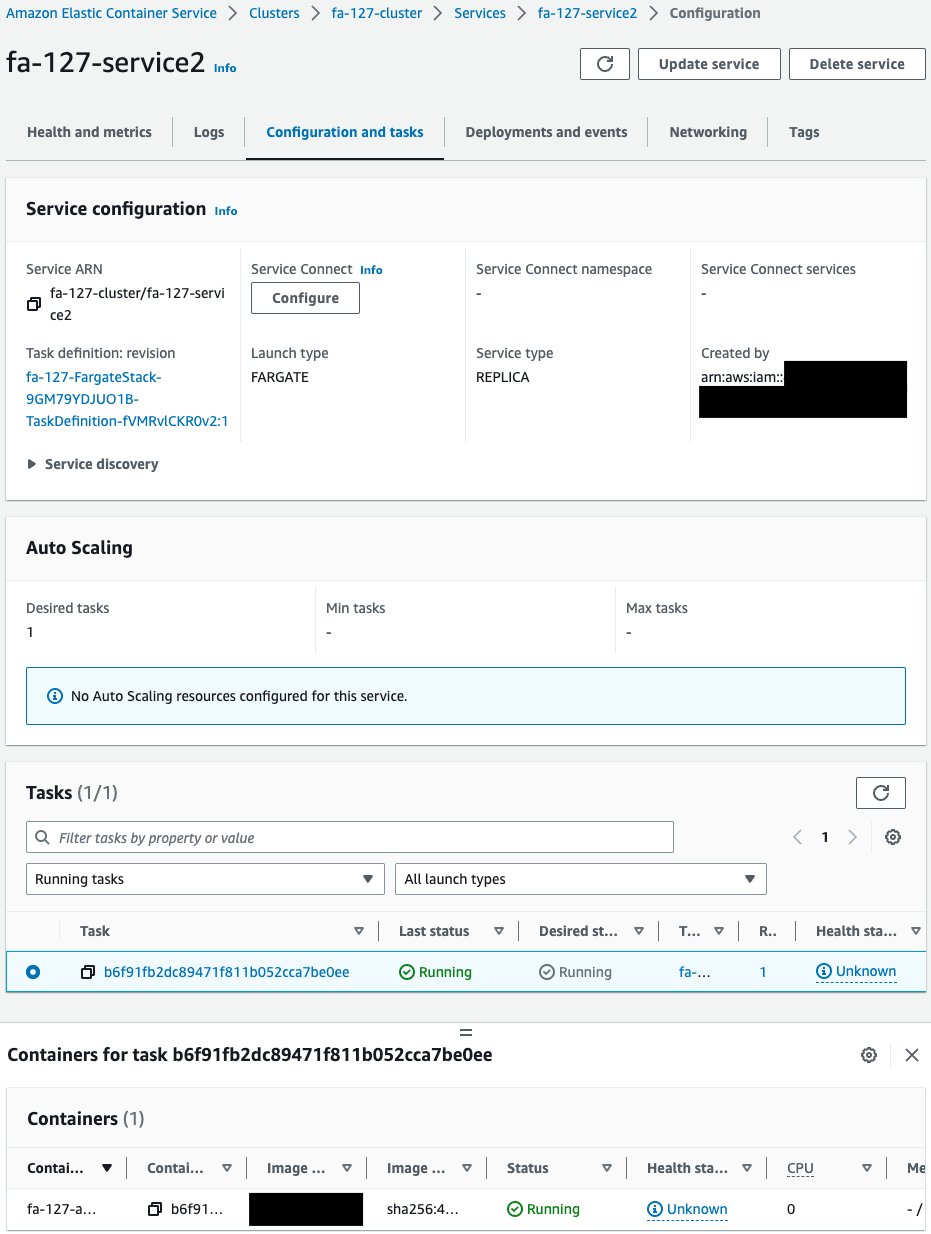

- ECS service 2: fa-127-service2

Check each resource from the AWS Management Console.

Check the ECR repository.

The image has been successfully pushed.

This means that the Lambda function associated with the CloudFormation custom resource started the CodeBuild build, and that the built image was automatically pushed to ECR.

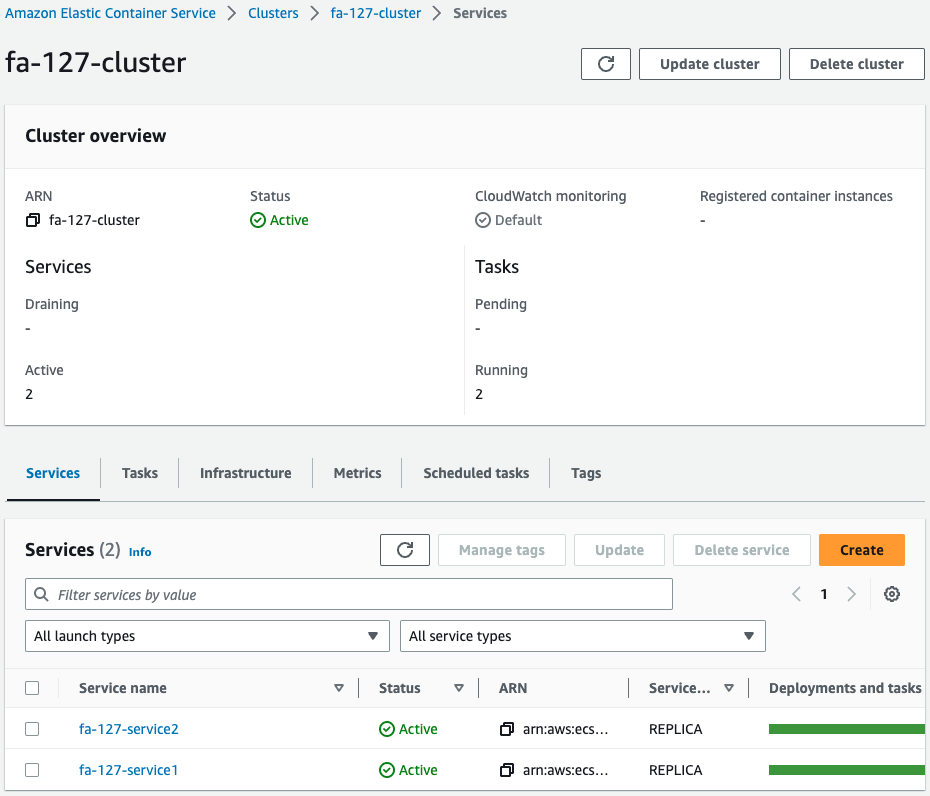

Check the ECS cluster.

You can see that there are two services running in the cluster.

Check each service.

One task is created for each service, and one container is started in that task.

The task and container information is summarized below.

- Service 1
  - Task ID: a986b1c860bf4f8cb02740b037514351
  - Container name: fa-127-amazon-linux

- Service 2
  - Task ID: b6f91fb2dc89471f811b052cca7be0ee
  - Container name: fa-127-amazon-linux
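The task IDs listed here can also be looked up from the CLI instead of the console. This is a small sketch, assuming a configured AWS CLI; `service_task_ids` is a hypothetical helper name, and the cluster and service names are supplied by the caller.

```shell
# Hypothetical helper: print the task IDs of a service, one per line.
# $1 = cluster name, $2 = service name
service_task_ids() {
  aws ecs list-tasks \
    --cluster "$1" \
    --service-name "$2" \
    --query 'taskArns[]' \
    --output text |
    tr '\t' '\n' |
    sed 's|.*/||'   # keep only the ID after the last "/" of each ARN
}

# Usage (placeholder cluster name):
#   service_task_ids my-cluster fa-127-service1
```

The ID is the last path segment of the task ARN, which is the value `aws ecs execute-command` accepts for `--task`.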

Operation Check

Access to containers via NAT gateway

Now that you are ready, use ECS Exec to access the Service 1 container.

Access is through the AWS CLI.

```bash
% aws ecs execute-command \
    --cluster fa-127-cluster \
    --task a986b1c860bf4f8cb02740b037514351 \
    --container fa-127-amazon-linux \
    --interactive --command "/bin/sh"
...
sh-5.2#
```

We were able to access the container for Service 1.

In this way, ECS Exec can reach a container in a private subnet via the NAT gateway.

Access containers via VPC endpoints

Similarly, access the Service 2 container.

```bash
% aws ecs execute-command \
    --cluster fa-127-cluster \
    --task b6f91fb2dc89471f811b052cca7be0ee \
    --container fa-127-amazon-linux \
    --interactive \
    --command "/bin/sh"
...
sh-5.2#
```

We were also able to access the Service 2 container.

In this way, ECS Exec can also reach a container in a private subnet via the VPC endpoints.
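Since both sessions follow the same pattern, the lookup and the exec call can be wrapped in one function. The following is only a sketch: `ecs_exec_shell` is a hypothetical name, it assumes a configured AWS CLI with the Session Manager plugin installed on the client, and it simply picks the first task that `list-tasks` returns for the service.

```shell
# Hypothetical helper: open /bin/sh in the first task of a service.
# $1 = cluster name, $2 = service name, $3 = container name
ecs_exec_shell() {
  local task_arn
  task_arn="$(aws ecs list-tasks \
    --cluster "$1" \
    --service-name "$2" \
    --query 'taskArns[0]' \
    --output text)"

  # With --output text, a missing first element is printed as "None".
  if [ -z "$task_arn" ] || [ "$task_arn" = "None" ]; then
    echo "no task found for service $2" >&2
    return 1
  fi

  aws ecs execute-command \
    --cluster "$1" \
    --task "$task_arn" \
    --container "$3" \
    --interactive \
    --command "/bin/sh"
}

# Usage, with the service and container names from this page:
#   ecs_exec_shell fa-127-cluster fa-127-service2 fa-127-amazon-linux
```

The same call works for either service, because the network path (NAT gateway or VPC endpoints) is decided by the task's subnet rather than by the client.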

Summary

We have seen how to use ECS Exec to access ECS (Fargate) containers in private subnets.

Containers in private subnets can be reached either via a NAT gateway or via VPC endpoints.