Creating Lambda with CloudFormation

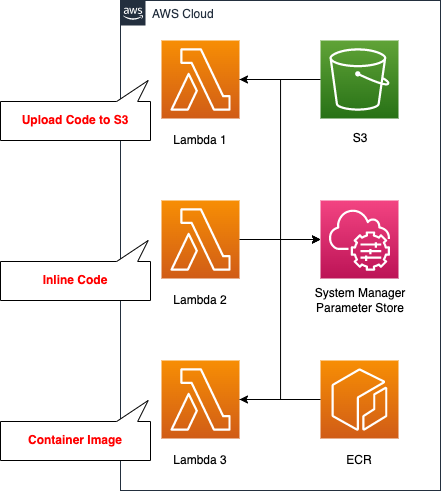

When creating a Lambda with CloudFormation, there are three main patterns as follows.

- Uploading the code to an S3 bucket

- Writing the code inline

- Preparing a container image

In this article, we will create a Lambda with the same content using these three patterns, and check the flow.

Environment

We will create a Lambda function for each pattern, but the content to be executed is the same. In this case, we will access the SSM Parameter Store and retrieve the parameters stored in it. We will also use Python 3.8 as the runtime environment.

CloudFormation template files

We will build the above configuration using CloudFormation. We have placed the CloudFormation template at the following URL.

https://github.com/awstut-an-r/awstut-fa/tree/main/020

Explanation of key points of template file

We will cover the key points of each template file to configure this architecture.

Create S3 bucket to upload Zip file with compressed code

Create an S3 bucket to upload the code for Lambda function 1.

    Resources:
      S3Bucket:
        Type: AWS::S3::Bucket
        Properties:
          BucketName: !Sub "${Prefix}-bucket"
          AccessControl: Private

No special settings are required.

Create ECR repository to push container image

Next, create an ECR repository to push the image for Lambda3.

    Resources:
      ECRRepository:
        Type: AWS::ECR::Repository
        Properties:
          RepositoryName: !Sub ${Prefix}-repository

No special settings are required here either.

Register test parameters in SSM Parameter Store

For validation purposes, we will define a parameter in the SSM Parameter Store that all three Lambda functions will access.

    Resources:
      SSMParameter:
        Type: AWS::SSM::Parameter
        Properties:
          Name: !Ref Name
          Type: String
          Value: !Ref Value

No special settings are required here either. Define a single parameter of type string.

IAM role for Lambda functions

Define the IAM role for the Lambda functions. The role needs two sets of permissions: one that lets the function itself run, and one that lets it access the SSM Parameter Store.

    Resources:
      LambdaRole:
        Type: AWS::IAM::Role
        Properties:
          AssumeRolePolicyDocument:
            Version: 2012-10-17
            Statement:
              - Effect: Allow
                Action: sts:AssumeRole
                Principal:
                  Service:
                    - lambda.amazonaws.com
          Policies:
            - PolicyName: GetSSMParameter
              PolicyDocument:
                Version: 2012-10-17
                Statement:
                  - Effect: Allow
                    Action:
                      - ssm:GetParameter
                    Resource:
                      - !Sub "arn:aws:ssm:${AWS::Region}:${AWS::AccountId}:parameter/${SSMParameter}"
          ManagedPolicyArns:
            - arn:aws:iam::aws:policy/service-role/AWSLambdaBasicExecutionRole

The first point is that we need to grant the necessary permissions to execute the Lambda function. In this case, we will use the AWS managed policy AWSLambdaBasicExecutionRole to grant them. This policy includes the following permissions:

- logs:CreateLogGroup

- logs:CreateLogStream

- logs:PutLogEvents

These permissions are required to save logs to CloudWatch Logs.

The second point is the permissions related to the SSM Parameter Store. Since we are verifying access to AWS resources from Lambda, we will create functions that retrieve a parameter stored in the SSM Parameter Store. Specify the ARN of the aforementioned parameter in the Resource property, and specify ssm:GetParameter in the Action property.
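To make the Resource value concrete, here is a minimal Python sketch of the string that the !Sub expression in the policy resolves to. The function name `ssm_parameter_arn` and the account ID are hypothetical and used only for illustration. The sketch also strips a leading slash, on the assumption that you might later use a hierarchical parameter name such as /app/key, in which case the template's `parameter/${SSMParameter}` pattern would otherwise produce a double slash.

```python
def ssm_parameter_arn(region: str, account_id: str, name: str) -> str:
    """Build the ARN that the policy's Resource entry resolves to."""
    # Hierarchical names start with "/", but the ARN must contain exactly
    # one "/" after "parameter", so any leading slash is stripped here.
    return f"arn:aws:ssm:{region}:{account_id}:parameter/{name.lstrip('/')}"


# Placeholder account ID; the parameter name is the one used later in this article.
arn = ssm_parameter_arn("ap-northeast-1", "123456789012", "fa-020-parameter")
print(arn)
```

With the simple name used in this article, the template's expression and this sketch produce the same ARN.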

Create Lambda function from Zip file uploaded to S3 bucket

First, look at Lambda function 1, which is defined from code uploaded to an S3 bucket.

    Resources:
      Function1:
        Type: AWS::Lambda::Function
        Properties:
          Code:
            S3Bucket: !Ref S3Bucket
            S3Key: !Ref S3Key
          Environment:
            Variables:
              ssm_parameter_name: !Ref SSMParameter
              region_name: !Ref AWS::Region
          FunctionName: !Sub ${Prefix}-function1
          Handler: !Ref Handler
          MemorySize: !Ref MemorySize
          PackageType: Zip
          Runtime: !Ref Runtime
          Role: !Ref LambdaRoleArn

Specify the aforementioned S3 bucket and the name of the uploaded Zip file in the S3Bucket and S3Key properties. Also, specify “Zip” in the PackageType property. As mentioned above, this is to upload the code as a Zip file.

The Environment property allows you to define environment variables that can be referenced in the function. You can pass values from the CloudFormation side to the function side via these environment variables. In this case, we will pass the name of the parameter stored in the SSM Parameter Store and the region name.
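On the function side, these values arrive as ordinary environment variables. The following is a minimal sketch of reading them with an early, readable failure when one is missing. The helper name `read_settings` is hypothetical, and the dictionary passed in stands in for the variables that Lambda would set from the template.

```python
import os


def read_settings(environ=os.environ):
    """Return the two values the template passes in, failing early if one is missing."""
    required = ("ssm_parameter_name", "region_name")
    missing = [key for key in required if key not in environ]
    if missing:
        raise RuntimeError(f"missing environment variables: {', '.join(missing)}")
    return {key: environ[key] for key in required}


# Simulate the variables that the Environment property would set.
settings = read_settings({
    "ssm_parameter_name": "fa-020-parameter",
    "region_name": "ap-northeast-1",
})
print(settings["ssm_parameter_name"])
```

Inside a real function you would call `read_settings()` with no argument so that it reads from `os.environ`.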

Specify the handler to be executed in the Handler property, in the form of the module name followed by the function name (for example, index.lambda_handler), and specify the runtime environment in the Runtime property.
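As a rough illustration of how a handler string such as index.lambda_handler maps to code, the sketch below splits the string on its last dot, imports the module, and looks up the function. This is not Lambda's actual implementation, only an approximation of the idea. The name `resolve_handler` is hypothetical, and a stand-in module is registered so the example runs without index.py on disk.

```python
import importlib
import sys
import types


def resolve_handler(handler: str):
    """Split "module.function" on the last dot and return the function object."""
    module_name, _, function_name = handler.rpartition(".")
    if not module_name:
        raise ValueError(f"handler must look like 'module.function': {handler!r}")
    module = importlib.import_module(module_name)
    return getattr(module, function_name)


# Register a stand-in "index" module so the sketch runs without index.py on disk.
fake_index = types.ModuleType("index")
fake_index.lambda_handler = lambda event, context: {"statusCode": 200}
sys.modules["index"] = fake_index

handler = resolve_handler("index.lambda_handler")
print(handler({}, None))
```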

In the MemorySize property, specify the amount of memory to be allocated when executing the Lambda function. Lambda allocates CPU power in proportion to this value, so it also determines the CPU available at execution time.

In the Role property, specify the IAM role as described above.

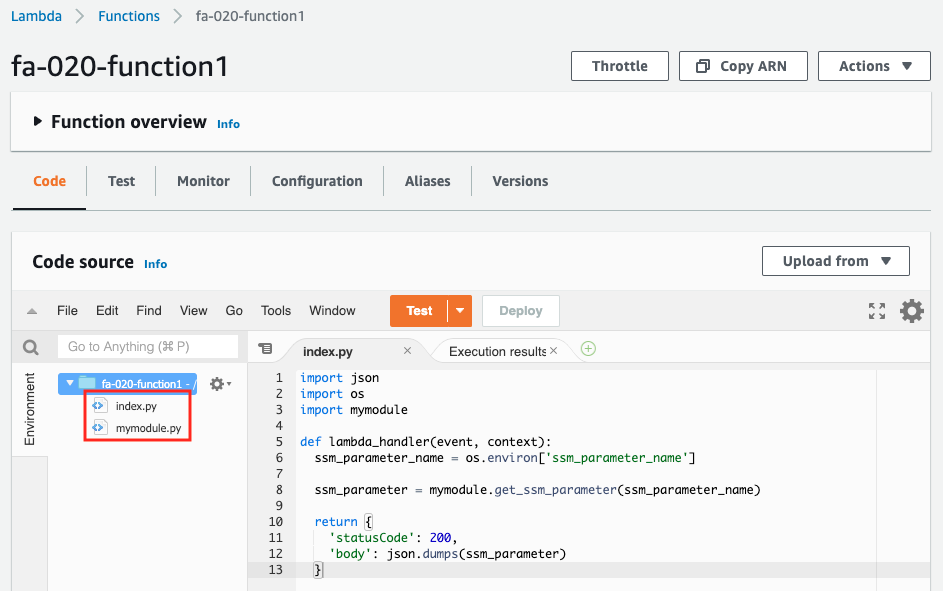

Upload two files.

The first one is mymodule.py.

    import boto3
    import os

    def get_ssm_parameter(parameter_name, client=None):
        if not client:
            client = boto3.client('ssm', region_name=os.environ['region_name'])
        return client.get_parameter(Name=parameter_name)['Parameter']['Value']

The second one is index.py.

    import json
    import os
    import mymodule

    def lambda_handler(event, context):
        ssm_parameter_name = os.environ['ssm_parameter_name']
        ssm_parameter = mymodule.get_ssm_parameter(ssm_parameter_name)
        return {
            'statusCode': 200,
            'body': json.dumps(ssm_parameter)
        }

In this way, in addition to the file defining the function body, a separate module file can be uploaded and used.
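One practical benefit of the separate module is that get_ssm_parameter accepts an optional client, so it can be exercised locally without AWS access. The sketch below repeats the function with boto3 imported lazily (a change made only so the example runs without boto3 installed) and passes in `FakeSSMClient`, a hypothetical stand-in that mimics the shape of a get_parameter response.

```python
import os


def get_ssm_parameter(parameter_name, client=None):
    """Same logic as mymodule.py, with boto3 imported lazily for this sketch."""
    if not client:
        import boto3  # only needed when no client is injected
        client = boto3.client("ssm", region_name=os.environ["region_name"])
    return client.get_parameter(Name=parameter_name)["Parameter"]["Value"]


class FakeSSMClient:
    """Hypothetical stand-in that mimics the shape of a get_parameter response."""

    def __init__(self, values):
        self._values = values

    def get_parameter(self, Name):
        return {"Parameter": {"Name": Name, "Value": self._values[Name]}}


fake = FakeSSMClient({"fa-020-parameter": "hello, awstut !"})
value = get_ssm_parameter("fa-020-parameter", client=fake)
print(value)
```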

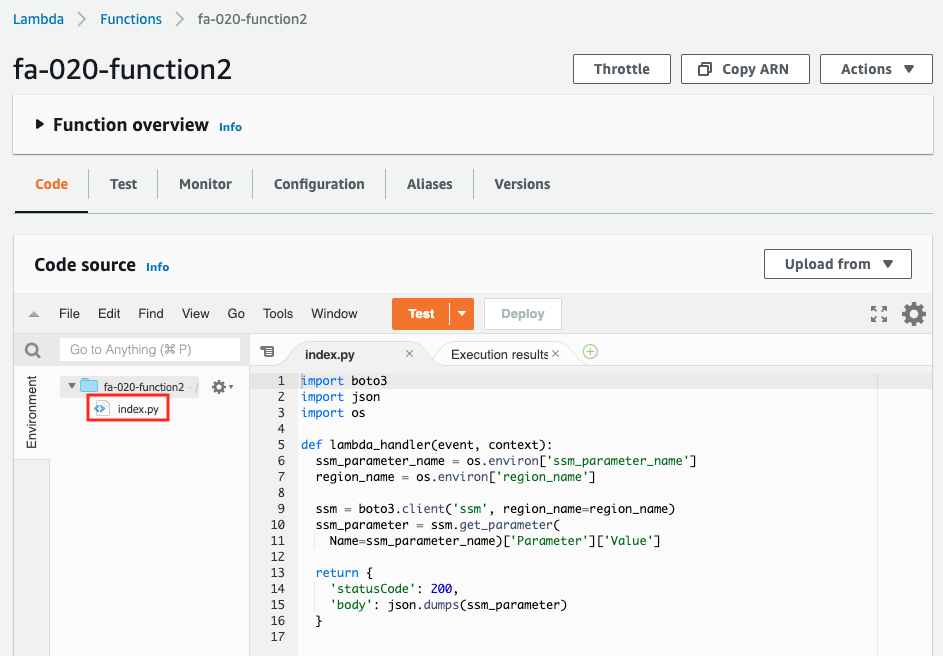

Create Lambda function from code described inline

Next, look at Lambda function 2, which is defined from code written inline.

    Resources:
      Function2:
        Type: AWS::Lambda::Function
        Properties:
          Code:
            ZipFile: |
              import boto3
              import json
              import os

              def lambda_handler(event, context):
                  ssm_parameter_name = os.environ['ssm_parameter_name']
                  region_name = os.environ['region_name']
                  ssm = boto3.client('ssm', region_name=region_name)
                  ssm_parameter = ssm.get_parameter(
                      Name=ssm_parameter_name)['Parameter']['Value']
                  return {
                      'statusCode': 200,
                      'body': json.dumps(ssm_parameter)
                  }
          ...

Depending on the runtime environment, you may be able to use the ZipFile property to write the code inline.

(Node.js and Python) The source code of your Lambda function. If you include your function source inline with this parameter, AWS CloudFormation places it in a file named index and zips it to create a deployment package.

AWS::Lambda::Function Code

In this case, we use Python 3.8 as the runtime environment, which satisfies the condition for writing the code inline. In addition, we set the Handler property so that its module name matches the name of the automatically created file (index.py).

This method of using the ZipFile property is useful when you want to create a simple function because you can describe the code inline.
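To picture what happens to inline code, the following sketch imitates the behavior described in the quoted documentation: the source is placed in a file named index.py and zipped into a deployment package. It is only a local approximation using the standard library, not CloudFormation's own code, and the name `package_inline_source` is hypothetical.

```python
import io
import zipfile

INLINE_SOURCE = """\
def lambda_handler(event, context):
    return {'statusCode': 200}
"""


def package_inline_source(source: str, file_name: str = "index.py") -> bytes:
    """Zip a single source string into an in-memory archive under file_name."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(file_name, source)
    return buffer.getvalue()


package = package_inline_source(INLINE_SOURCE)
with zipfile.ZipFile(io.BytesIO(package)) as archive:
    print(archive.namelist())
```

Because the file is always named index, the module part of the Handler property has to be index as well.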

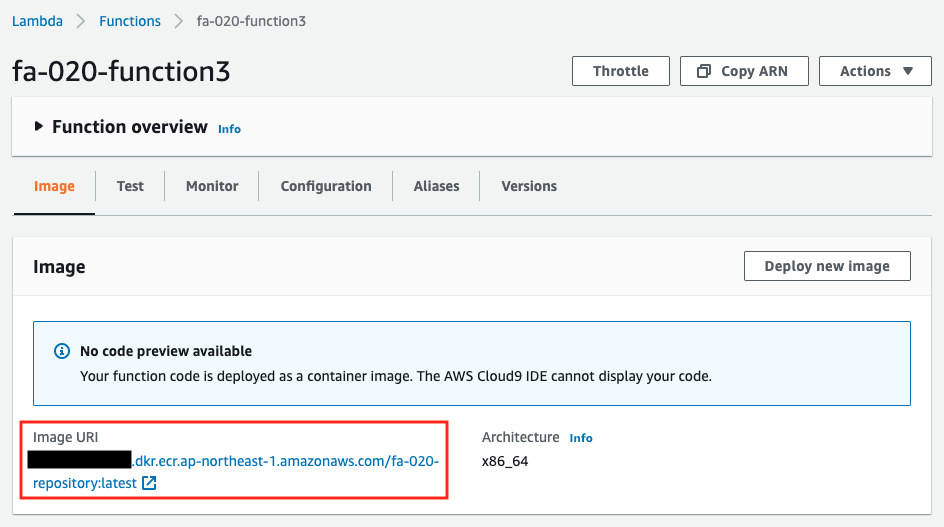

Creating Lambda function from container image

Finally, look at Lambda function 3, which is defined from a container image.

    Resources:
      Function3:
        Type: AWS::Lambda::Function
        Properties:
          Code:
            ImageUri: !Ref ImageUri
          ...

In the ImageUri property, we specify the URI of the image pushed to the ECR repository mentioned earlier.
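For reference, the URI of an image in a private ECR repository follows a fixed pattern made up of the account ID, region, repository name, and tag. The sketch below composes one. The function name `ecr_image_uri`, the account ID, and the `latest` tag are placeholders, and the domain assumes a standard commercial region.

```python
def ecr_image_uri(account_id: str, region: str, repository: str, tag: str = "latest") -> str:
    """Compose an image URI for a private ECR repository."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


# Placeholder account ID and tag; the repository name is the one created in this article.
image_uri = ecr_image_uri("123456789012", "ap-northeast-1", "fa-020-repository")
print(image_uri)
```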

In the Dockerfile, we define the image to be created.

    FROM public.ecr.aws/lambda/python:3.8

    COPY index.py ${LAMBDA_TASK_ROOT}
    COPY mymodule.py ${LAMBDA_TASK_ROOT}

    CMD [ "index.lambda_handler" ]

The code executed in the container is identical to that of the first function. The two files are copied into the image.

The advantage of the image-type Lambda function is that it supports image sizes up to 10 GB. This is a big difference from the usual Zip-type Lambda function, which has an upper limit of 50 MB for the compressed package and 250 MB after decompression. For example, if you want to execute a large binary file, you can consider adopting an image-type Lambda function.
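As a rough decision aid, the size limits can be turned into a small check that suggests a PackageType. This is only a sketch: it uses the figures quoted in this article (50 MB zipped, 250 MB unzipped, 10 GB for an image), treats the unzipped size as an approximation of the image size, and the name `choose_package_type` is hypothetical. Check the current Lambda quotas before relying on the numbers.

```python
MB = 1024 * 1024
GB = 1024 * MB

ZIP_UPLOAD_LIMIT = 50 * MB   # zipped deployment package
UNZIPPED_LIMIT = 250 * MB    # package contents after extraction
IMAGE_LIMIT = 10 * GB        # container image


def choose_package_type(zipped_bytes: int, unzipped_bytes: int) -> str:
    """Suggest "Zip" or "Image" based on the package size."""
    if zipped_bytes <= ZIP_UPLOAD_LIMIT and unzipped_bytes <= UNZIPPED_LIMIT:
        return "Zip"
    # Rough approximation: assume the image is about as large as the unzipped code.
    if unzipped_bytes <= IMAGE_LIMIT:
        return "Image"
    raise ValueError("too large for either package type")


print(choose_package_type(10 * MB, 40 * MB))
print(choose_package_type(300 * MB, 900 * MB))
```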

Architecting

We will use CloudFormation to build this environment and check its actual behavior. In this article, we will create a CloudFormation stack in two parts.

Create S3 bucket and ECR repository

Before creating the Lambda functions, we will create the S3 bucket and the ECR repository, then upload the Zip file and push the image.

Create the resources. The following command is an example of creating a CloudFormation stack from the AWS CLI.

    $ aws cloudformation create-stack \
      --stack-name fa-020-s3-and-ecr \
      --template-url https://[bucket-name].s3.ap-northeast-1.amazonaws.com/fa-020-s3-and-ecr.yaml

The information for the two resources created this time is as follows.

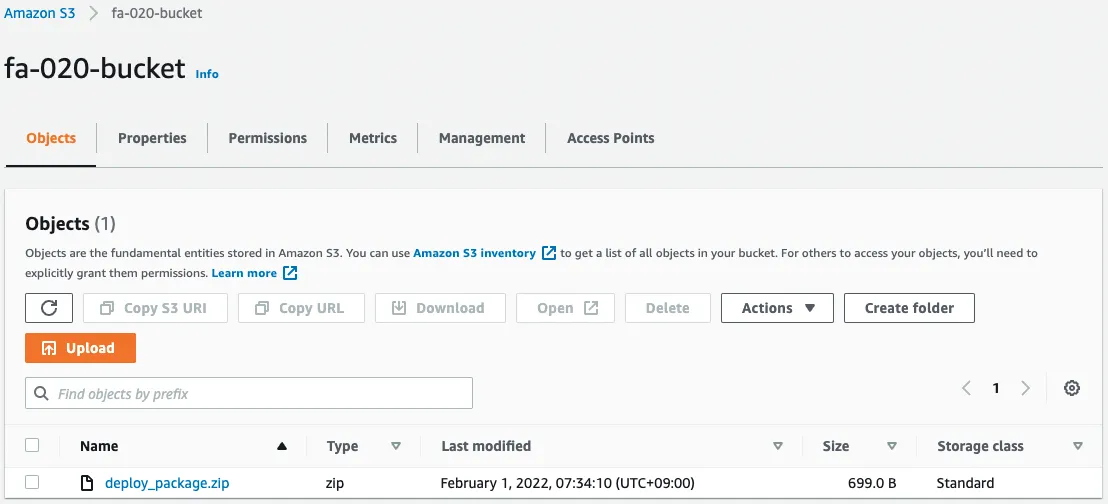

- S3 bucket name: fa-020-bucket

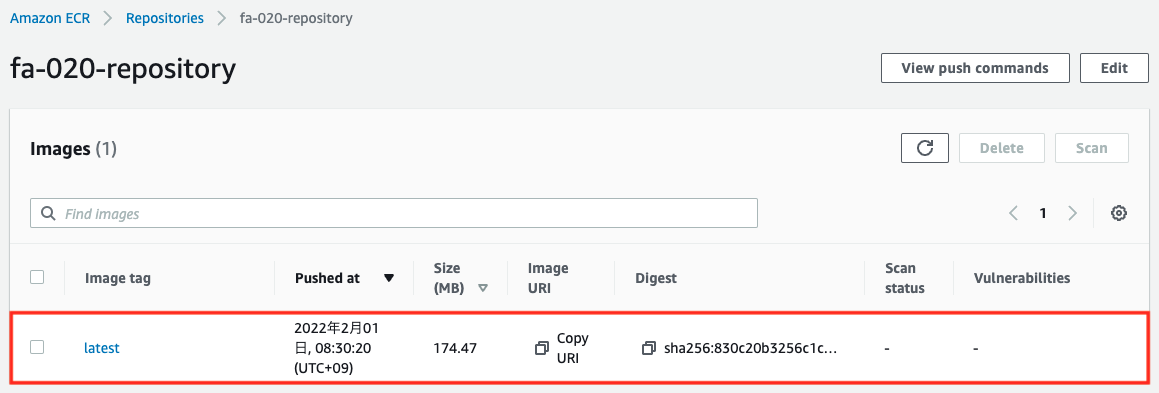

- ECR repository name: fa-020-repository

The first thing to do for the S3 bucket is to zip up the source code and upload it to the bucket.

    $ ls
    index.py mymodule.py

    $ zip deploy_package.zip *
    adding: index.py (deflated 51%)
    adding: mymodule.py (deflated 39%)

    $ ls
    deploy_package.zip index.py mymodule.py

    $ aws s3 cp deploy_package.zip s3://fa-020-bucket
    upload: ./deploy_package.zip to s3://fa-020-bucket/deploy_package.zip

The next step is to work on the ECR repository: create an image and push it to the repository. Please refer to the following page for details.

Check the AWS Management Console. First is the S3 bucket.

You can see that the Zip file containing the compressed source code has been uploaded. Next is the ECR repository.

You will see that the image you created has been pushed.

Create rest of CloudFormation stack

Create the remaining CloudFormation stacks.

Please refer to the following pages for more information on creating stacks and how to check each stack.

After checking the resources for each stack, the information for the main resources created this time is as follows:

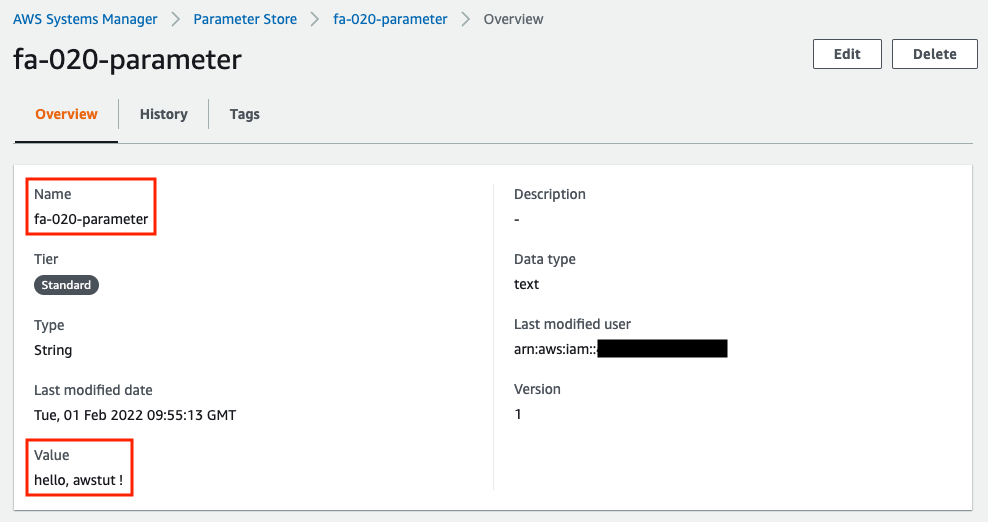

- Name of the SSM parameter: fa-020-parameter

- SSM parameter value: hello, awstut !

- Lambda function 1: fa-020-function1

- Lambda function 2: fa-020-function2

- Lambda function 3: fa-020-function3

We will check the created resources from the AWS Management Console. First, we will check the SSM Parameter Store.

You can see that the string “hello, awstut !” is stored in the parameter “fa-020-parameter”. Next, let’s check the first Lambda function.

You can see the two source files that were included in the Zip file. Next is the second Lambda function.

You can see a single source file. As per the specification mentioned above, the code described inline has been created as a source file named index.py.

For the third Lambda function, you can see that the image has been pulled from the ECR repository and the function has been created.

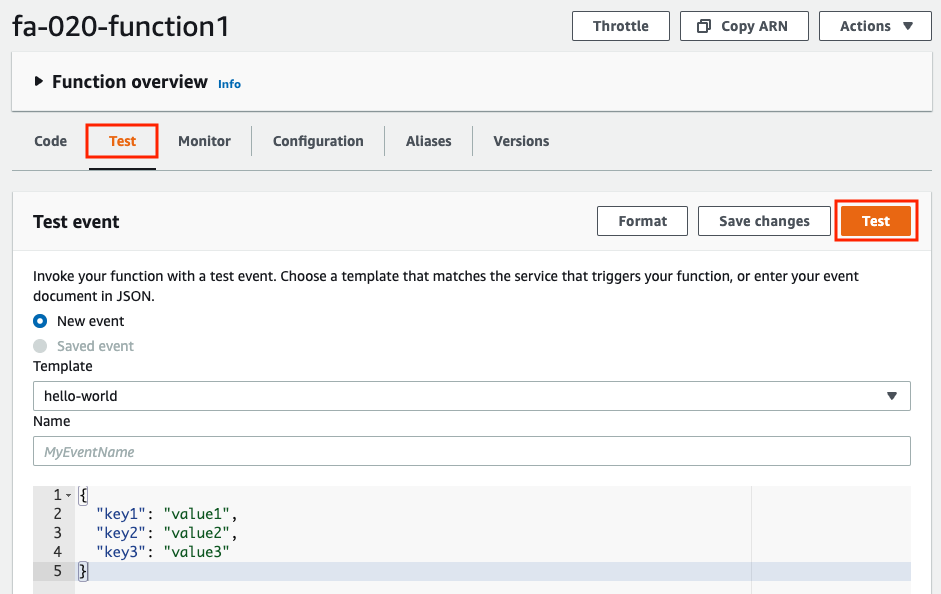

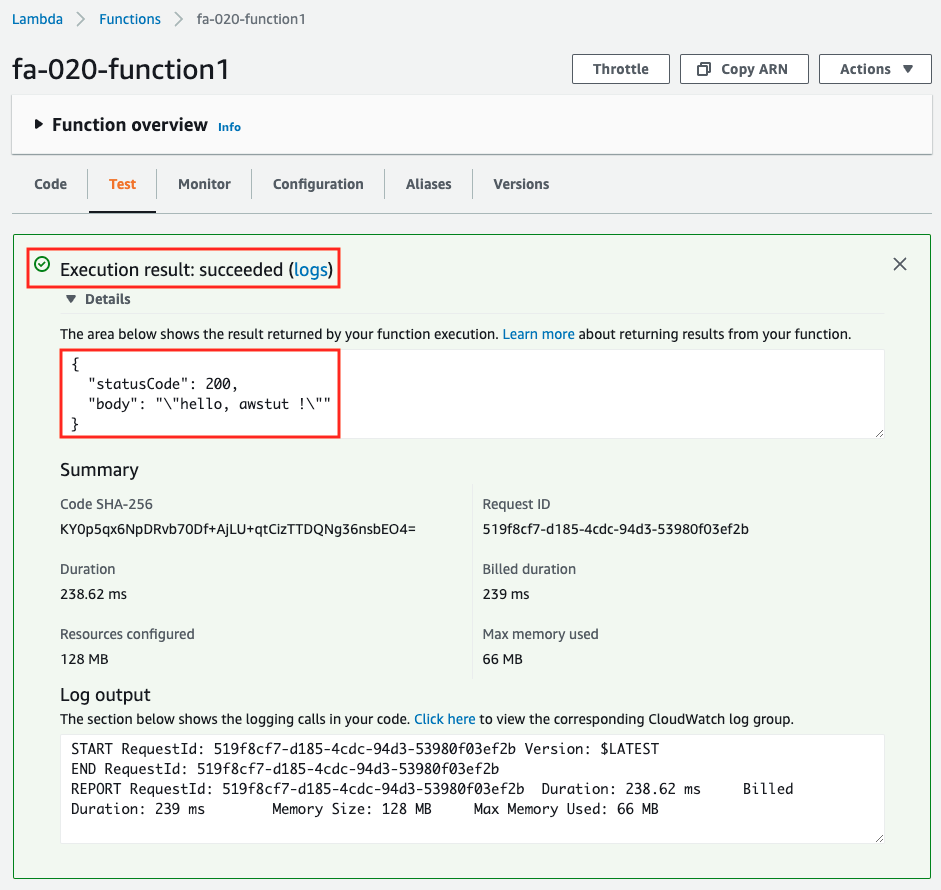

Lambda function execution 1

Now that we are ready, we will execute the functions in order. First is the first function. The function execution is also done from the AWS Management Console.

After selecting the “Test” tab, click the “Test” button. This time, the default Test event is fine.

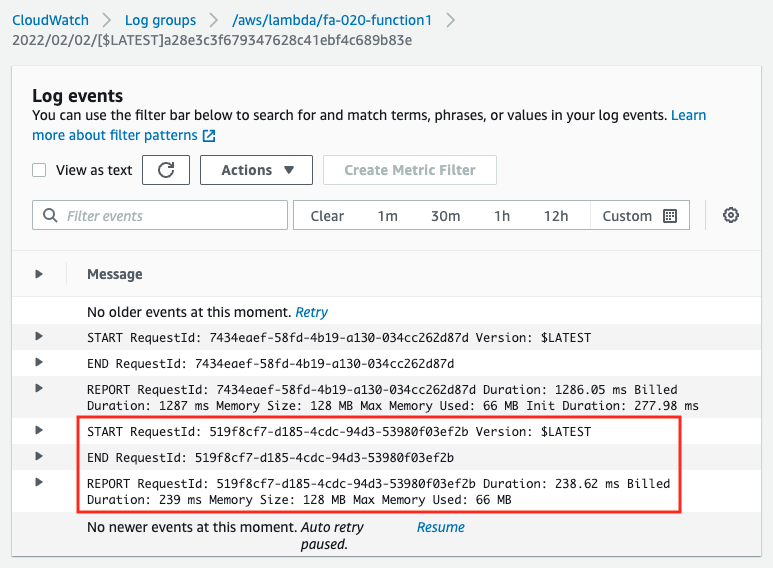

The results will be displayed on the same page. The string “hello, awstut !” is returned. You can also check the log of the function execution in CloudWatch Logs.

In the CloudFormation template file, we have not defined a CloudWatch Logs log group, but the log group and log stream are created and written to automatically.
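Note that the returned body is JSON-encoded by json.dumps in the handler, so the string appears wrapped in double quotes in the execution result. The short sketch below reproduces the shape of the return value from this article and decodes the body again.

```python
import json

# Shape of the value returned by lambda_handler in this article.
response = {"statusCode": 200, "body": json.dumps("hello, awstut !")}

# The body is a JSON document, so the string arrives wrapped in double quotes.
print(response["body"])

# Decoding the body recovers the original parameter value.
value = json.loads(response["body"])
print(value)
```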

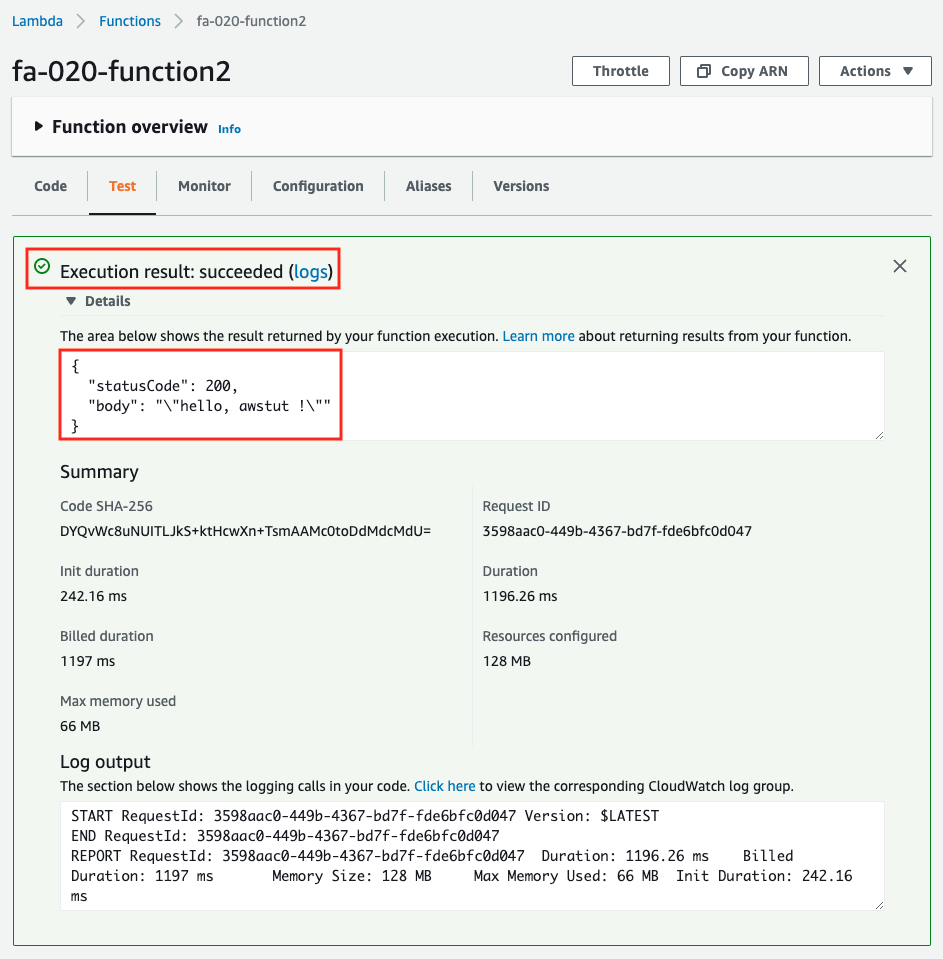

Lambda function execution 2

Next is the second function. Execute it in the same way as before.

It worked fine. The inline defined code worked as well.

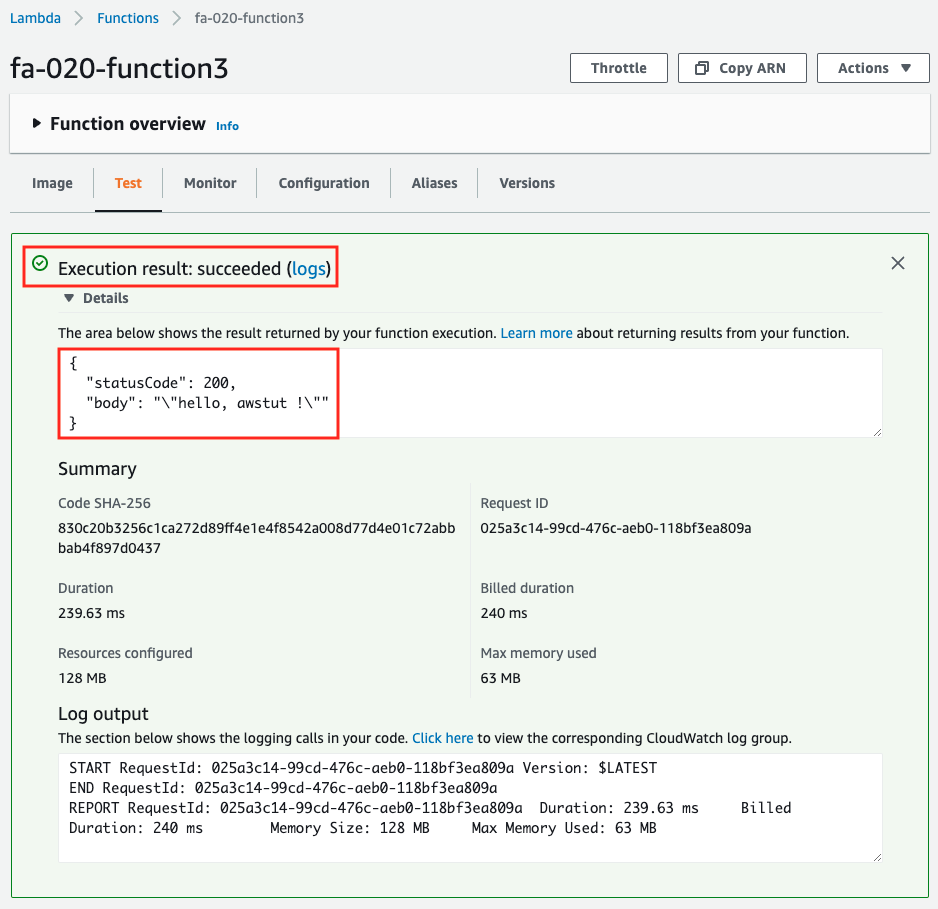

Lambda function execution 3

Finally, we will run the third function.

This also worked fine. The image type also worked fine.

Summary

We have built three patterns for creating Lambda with CloudFormation and checked their behavior.

When uploading source code to an S3 bucket, multiple modules can be zipped and uploaded as a Zip file, which is suitable for full-scale function development.

When writing code inline, there is no need to zip or upload the source code, so it is suitable for simple functions and quick tests.

For container image type functions, the upper limit of the image size is 10GB, so it is suitable when you want to execute large binary files.