Automatically create and deploy Lambda layer package for Python using CloudFormation custom resources

The following page covers how to create a Lambda layer.

On that page, the Lambda layer itself is created with CloudFormation, but the package for the layer was prepared manually in advance.

This time, we use a CloudFormation custom resource to automate the preparation of this package.

Specifically, the custom resource will automate the process of creating the package and placing it in the S3 bucket.

The target runtime is Python 3.8, and the libraries are installed with pip.

Environment

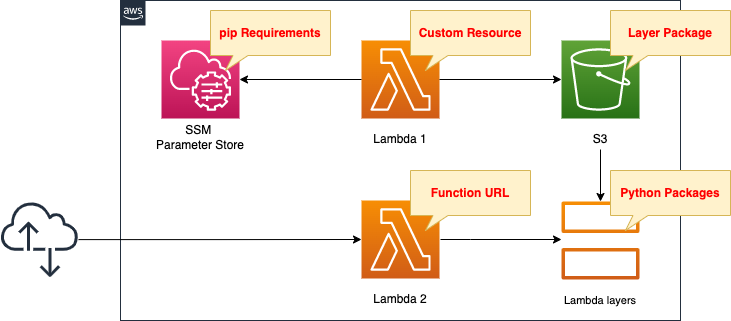

Create two Lambda functions.

In the first function, create a Lambda layer package and place it in an S3 bucket.

Associate the function with a CloudFormation custom resource so that it will automatically run when the CloudFormation stack is created.

Use pip to install the libraries to be included in the layer. The list of libraries to install is read from a value registered in the SSM parameter store.

The second function will be used to check the Lambda layer.

Enable the Function URL for this function.

CloudFormation template files

Build the above configuration with CloudFormation.

The CloudFormation templates are located at the following URL:

https://github.com/awstut-an-r/awstut-fa/tree/main/064

Explanation of key points of the template files

SSM Parameter Store

    Resources:
      RequirementsParameter:
        Type: AWS::SSM::Parameter
        Properties:
          Name: !Ref Prefix
          Type: String
          Value: |
            requests
            beautifulsoup4

Register the libraries to be installed with pip in the SSM parameter store.

As described later, create requirements.txt from this string and install the libraries in bulk.
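As a minimal sketch of this step, the parameter value can be written to a requirements.txt file and read back line by line. The helper name `write_requirements` and the temporary directory are assumptions for illustration, and the string is hard-coded here rather than fetched from the SSM parameter store.

```python
import os
import tempfile


def write_requirements(value: str, path: str) -> list:
    """Write the multi-line parameter value to path and return the package names."""
    with open(path, "w") as f:
        f.write(value)
    # Read the file back the way pip would see it: one requirement per line.
    with open(path) as f:
        return [line.strip() for line in f if line.strip()]


# Same value as the SSM parameter defined in the template (hard-coded here).
parameter_value = "requests\nbeautifulsoup4\n"
requirements_path = os.path.join(tempfile.mkdtemp(), "requirements.txt")
packages = write_requirements(parameter_value, requirements_path)
print(packages)
```

Keeping the list in a parameter means the set of libraries can be changed without editing the function code.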

CloudFormation Custom Resource

First, check the Lambda functions to be executed in the custom resource.

    Resources:
      Function1:
        Type: AWS::Lambda::Function
        Properties:
          Architectures:
            - !Ref Architecture
          Environment:
            Variables:
              LAYER_PACKAGE: !Ref LayerPackage
              REGION: !Ref AWS::Region
              REQUIREMENTS_PARAMETER: !Ref RequirementsParameter
              S3_BUCKET: !Ref CodeS3Bucket
              S3_BUCKET_FOLDER: !Ref Prefix
          Code:
            ZipFile: |
              ...
          EphemeralStorage:
            Size: !Ref EphemeralStorageSize
          FunctionName: !Sub "${Prefix}-function1"
          Handler: !Ref Handler
          Runtime: !Ref Runtime
          Role: !GetAtt FunctionRole1.Arn
          Timeout: !Ref Timeout

The Architectures and Runtime properties are the key points.

These properties must match the values specified in the Lambda layer resource described below.

In this case, we set them as follows.

- Architecture: arm64

- Runtime: python3.8

The EphemeralStorage and Timeout properties may also need to be adjusted.

Depending on the size and number of libraries to be included in the Lambda layer, these values may need to be set higher.

In this case, we set them as follows.

- EphemeralStorage: 512

- Timeout: 300

The Environment property defines environment variables that are passed to the function.

Here we pass the file name of the package to be created, the name of the parameter in the SSM parameter store, and information about the S3 bucket where the package will be placed.

The code to be executed by the Lambda function is defined inline.

For more information, please refer to the following page.

Here is the code to execute:

    import boto3
    import cfnresponse
    import os
    import pip
    import shutil
    import subprocess

    # Settings passed in through the Environment property of the template.
    layer_package = os.environ['LAYER_PACKAGE']
    region = os.environ['REGION']
    requirements_parameter = os.environ['REQUIREMENTS_PARAMETER']
    s3_bucket = os.environ['S3_BUCKET']
    s3_bucket_folder = os.environ['S3_BUCKET_FOLDER']

    CREATE = 'Create'
    response_data = {}

    # /tmp is the writable ephemeral storage of the function.
    work_dir = '/tmp'
    requirements_file = 'requirements.txt'
    package_dir = 'python'

    requirements_path = os.path.join(work_dir, requirements_file)
    package_dir_path = os.path.join(work_dir, package_dir)
    layer_package_path = os.path.join(work_dir, layer_package)

    def lambda_handler(event, context):
      try:
        # Build the package only when the stack is created.
        if event['RequestType'] == CREATE:
          ssm_client = boto3.client('ssm', region_name=region)
          ssm_response = ssm_client.get_parameter(Name=requirements_parameter)
          requirements = ssm_response['Parameter']['Value']

          # Write the parameter value out as requirements.txt.
          with open(requirements_path, 'w') as file_data:
            print(requirements, file=file_data)

          # Install the libraries into /tmp/python.
          pip.main(['install', '-t', package_dir_path, '-r', requirements_path])

          # Zip the python directory into the layer package.
          shutil.make_archive(
            os.path.splitext(layer_package_path)[0],
            format='zip',
            root_dir=work_dir,
            base_dir=package_dir
            )

          # Upload the package to <folder>/<package name> in the bucket.
          s3_resource = boto3.resource('s3')
          bucket = s3_resource.Bucket(s3_bucket)
          bucket.upload_file(
            layer_package_path,
            '/'.join([s3_bucket_folder, layer_package])
            )

        # Report success to CloudFormation for every request type.
        cfnresponse.send(event, context, cfnresponse.SUCCESS, response_data)

      except Exception as e:
        print(e)
        cfnresponse.send(event, context, cfnresponse.FAILED, response_data)

Use the cfnresponse module to implement the function as a Lambda-backed custom resource.

For more information, please see the following page

The code does the following.

- Retrieve the environment variables defined in the CloudFormation template by accessing os.environ.

- Retrieve the value from the SSM parameter store with Boto3 and create the requirements.txt file.

- Pass requirements.txt to pip and install all libraries at once.

- Make a ZIP file of the installed libraries with shutil.make_archive.

- Upload the ZIP file to the S3 bucket with Boto3.
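The function calls pip.main, which pip treats as an internal entry point rather than a supported API. As a hedged alternative sketch (not the method used in the template), pip can be run as a subprocess of the current interpreter. The helper name `build_pip_command` and the paths are assumptions for illustration, and the install call itself is left commented out because it needs network access.

```python
import subprocess
import sys


def build_pip_command(target_dir: str, requirements_path: str) -> list:
    """Build the argument list for 'python -m pip install -t <dir> -r <file>'."""
    return [
        sys.executable, "-m", "pip", "install",
        "-t", target_dir,
        "-r", requirements_path,
    ]


command = build_pip_command("/tmp/python", "/tmp/requirements.txt")
print(command[1:])

# Inside the Lambda function, this line would run the installation and
# raise subprocess.CalledProcessError if pip exits with an error:
# subprocess.check_call(command)
```

Because check_call raises on a non-zero exit code, a failed installation would reach the except block and be reported to CloudFormation as FAILED.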

The IAM role for the function is as follows:

    Resources:
      FunctionRole1:
        Type: AWS::IAM::Role
        Properties:
          AssumeRolePolicyDocument:
            Version: 2012-10-17
            Statement:
              - Effect: Allow
                Action: sts:AssumeRole
                Principal:
                  Service:
                    - lambda.amazonaws.com
          ManagedPolicyArns:
            - arn:aws:iam::aws:policy/service-role/AWSLambdaBasicExecutionRole
          Policies:
            - PolicyName: CreateLambdaLayerPackagePolicy
              PolicyDocument:
                Version: 2012-10-17
                Statement:
                  - Effect: Allow
                    Action:
                      - ssm:GetParameter
                    Resource:
                      - !Sub "arn:aws:ssm:${AWS::Region}:${AWS::AccountId}:parameter/${RequirementsParameter}"
                  - Effect: Allow
                    Action:
                      - s3:PutObject
                    Resource:
                      - !Sub "arn:aws:s3:::${CodeS3Bucket}/*"

In addition to the AWS managed policy AWSLambdaBasicExecutionRole, we grant permission to retrieve the parameter from the SSM parameter store and to upload objects to the S3 bucket.

Next, check the CloudFormation custom resource itself.

    Resources:
      CustomResource:
        Type: Custom::CustomResource
        Properties:
          ServiceToken: !GetAtt Function1.Arn

Specify the Lambda function described above in the ServiceToken property.
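CloudFormation invokes the function behind a custom resource with a RequestType of Create, Update, or Delete. The following sketch illustrates the branching used by the function above, which builds the package only on Create and simply acknowledges the other request types. The helper name `decide_action` and the returned labels are assumptions for illustration, and the events are reduced to the single field that matters here.

```python
def decide_action(event: dict) -> str:
    """Return what the handler should do for a custom resource request."""
    if event["RequestType"] == "Create":
        # Stack creation: build the layer package and upload it to S3.
        return "build-and-upload"
    # Update and Delete: do no work, but still report SUCCESS so that
    # CloudFormation does not wait for a response until it times out.
    return "acknowledge-only"


actions = {
    request_type: decide_action({"RequestType": request_type})
    for request_type in ("Create", "Update", "Delete")
}
print(actions)
```

Responding to Delete matters in particular: without a response, deleting the stack would stall on the custom resource.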

Lambda Layer

    Resources:
      LambdaLayer:
        Type: AWS::Lambda::LayerVersion
        DependsOn:
          - CustomResource
        Properties:
          CompatibleArchitectures:
            - !Ref Architecture
          CompatibleRuntimes:
            - !Ref Runtime
          Content:
            S3Bucket: !Ref CodeS3Bucket
            S3Key: !Ref LayerS3Key
          Description: !Ref Prefix
          LayerName: !Ref Prefix

There are three key points.

The first is when this resource is created.

This resource must be created after the aforementioned Lambda function is executed.

Therefore, we specify a custom resource in DependsOn.

The second is the CompatibleArchitectures and CompatibleRuntimes properties.

These should be set to the same values as those specified in the Lambda function described above.

The third is the Content property.

Specify the ZIP file uploaded by the aforementioned Lambda function.
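For a Python layer, the libraries need to sit under a top-level python directory inside the ZIP file, which is why the function archives /tmp/python with base_dir set to python. The following sketch reproduces that layout locally and lists the archive entries. The temporary directory and the `dummy_pkg` package are stand-ins invented for illustration.

```python
import os
import shutil
import tempfile
import zipfile

# Stand-in for /tmp in the Lambda function.
work_dir = tempfile.mkdtemp()

# Stand-in for the libraries that pip would install into <work_dir>/python.
package_dir = os.path.join(work_dir, "python")
os.makedirs(os.path.join(package_dir, "dummy_pkg"))
with open(os.path.join(package_dir, "dummy_pkg", "__init__.py"), "w") as f:
    f.write("VALUE = 1\n")

# make_archive appends ".zip" to the base name, so this creates layer.zip.
archive_path = shutil.make_archive(
    os.path.join(work_dir, "layer"),
    format="zip",
    root_dir=work_dir,
    base_dir="python",
)

with zipfile.ZipFile(archive_path) as zf:
    names = zf.namelist()
print(names)
```

Every entry in the archive is prefixed with python/, so the libraries become importable once the layer is attached to a function.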

(Reference) Lambda function for confirmation

    Resources:
      Function2:
        Type: AWS::Lambda::Function
        Properties:
          Architectures:
            - !Ref Architecture
          Code:
            ZipFile: |
              import json
              import requests
              from bs4 import BeautifulSoup

              url = 'https://news.google.com/rss'

              def lambda_handler(event, context):
                r = requests.get(url)
                soup = BeautifulSoup(r.text, 'lxml')
                titles = [item.find('title').getText() for item in soup.find_all('item')]

                return {
                  'statusCode': 200,
                  'body': json.dumps(titles, indent=2)
                }
          FunctionName: !Sub "${Prefix}-function2"
          Handler: !Ref Handler
          Layers:
            - !Ref LambdaLayer
          Runtime: !Ref Runtime
          Role: !GetAtt FunctionRole2.Arn

Since this is a confirmation function, no special settings are required.

Specify the aforementioned Lambda layer in the Layers property.

The import statements load the libraries included in the layer.

If the function runs without an import error, we can confirm that both the layer package and the Lambda layer resource were created successfully.

The code uses requests and BeautifulSoup, both included in the layer, to retrieve the Google News RSS feed and return the title of each item.
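The title extraction can be tried locally without the layer. The following sketch swaps in the standard library's xml.etree.ElementTree in place of requests and BeautifulSoup, and parses a small hand-written sample instead of the live Google News feed. The sample feed and the helper names `extract_titles` and `build_response` are assumptions for illustration.

```python
import json
import xml.etree.ElementTree as ET

# Hand-written sample standing in for the RSS feed returned by the URL.
SAMPLE_RSS = """<rss version="2.0">
  <channel>
    <title>Sample feed</title>
    <item><title>First headline</title></item>
    <item><title>Second headline</title></item>
  </channel>
</rss>"""


def extract_titles(rss_text: str) -> list:
    """Return the title of every <item> element in the feed."""
    root = ET.fromstring(rss_text)
    return [item.findtext("title") for item in root.iter("item")]


def build_response(rss_text: str) -> dict:
    """Build a response in the same shape as the confirmation function."""
    return {
        "statusCode": 200,
        "body": json.dumps(extract_titles(rss_text), indent=2),
    }


response = build_response(SAMPLE_RSS)
print(response["body"])
```

Only the titles inside item elements are returned. The feed's own title sits directly under channel and is skipped.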

Building the environment

Using CloudFormation, we will build this environment and check the actual behavior.

Create CloudFormation stacks and check resources in stacks

Create the CloudFormation stacks.

For information on how to create stacks and check each stack, please refer to the following page.

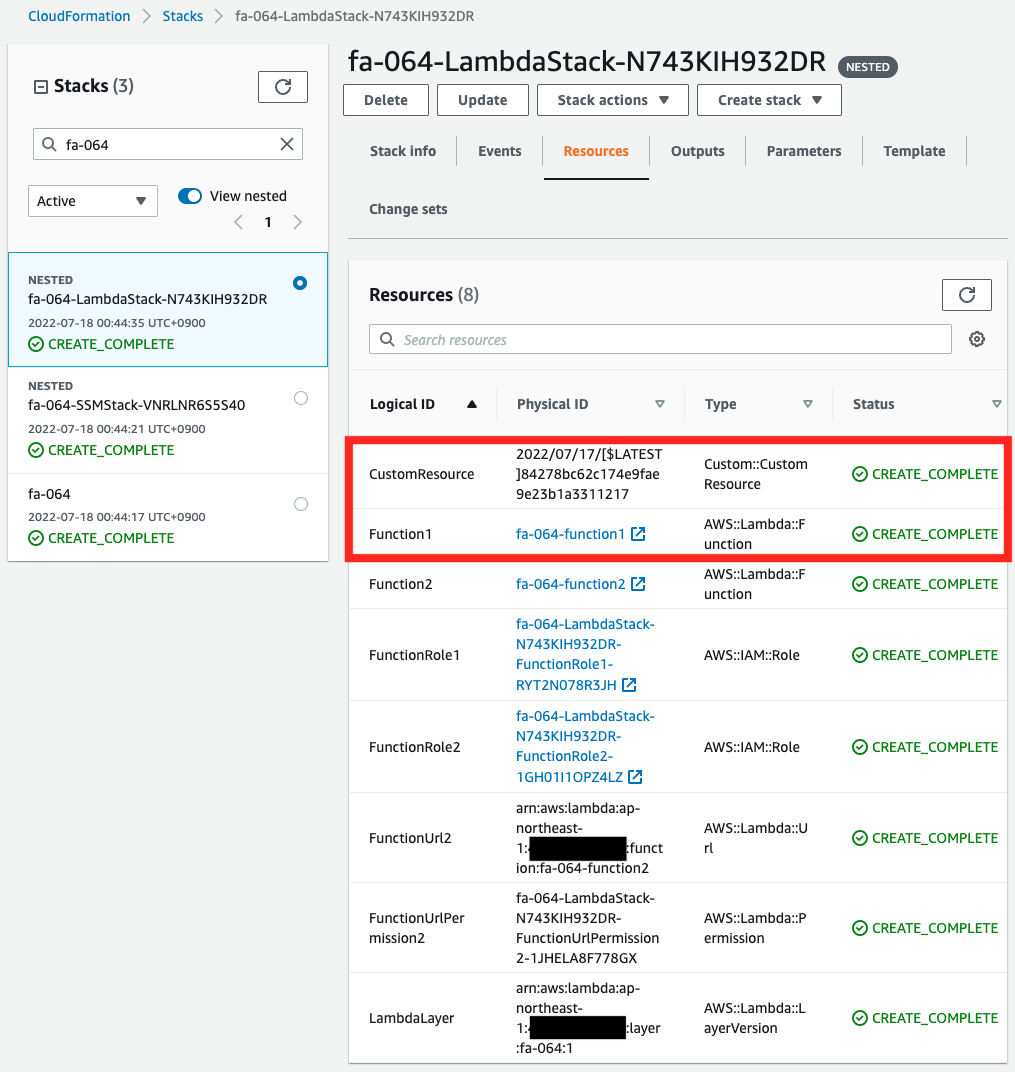

After checking the resources in each stack, the main resources created this time are as follows.

- Lambda function 1: fa-064-function1

- Lambda function 2: fa-064-function2

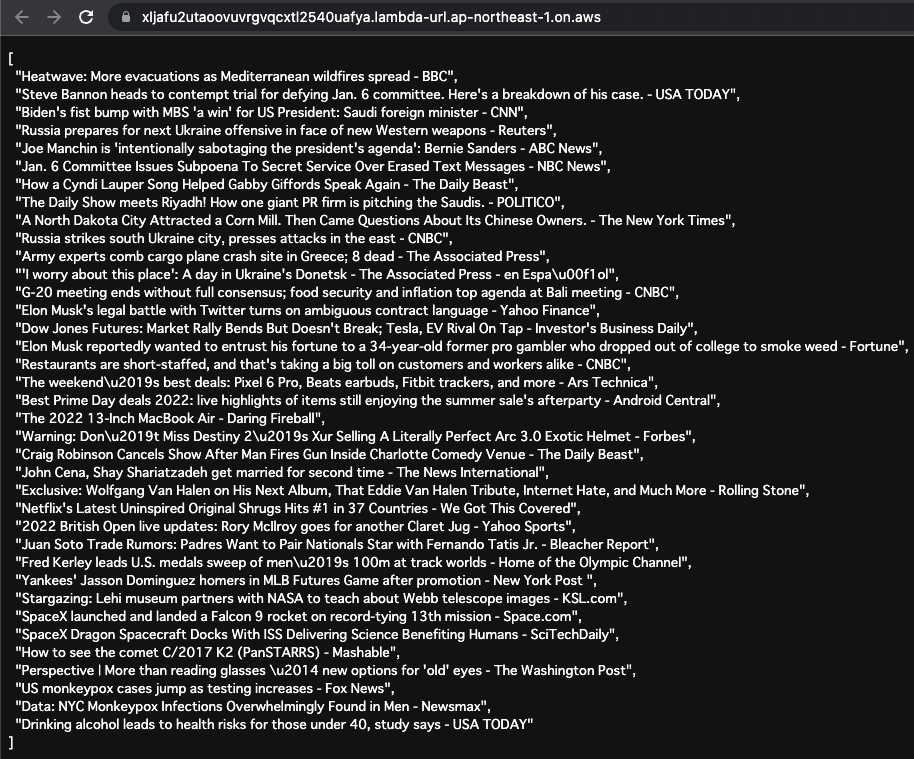

- Function URL for Lambda function 2: https://xljafu2utaoovuvrgvqcxtl2540uafya.lambda-url.ap-northeast-1.on.aws/

- Parameters in SSM parameter store: fa-064

- S3 bucket and folder to store packages for Lambda layer: awstut-bucket/fa-064

Check each resource from the AWS Management Console.

First, check the CloudFormation custom resource.

You can see that the Lambda function and the custom resource itself have been successfully created.

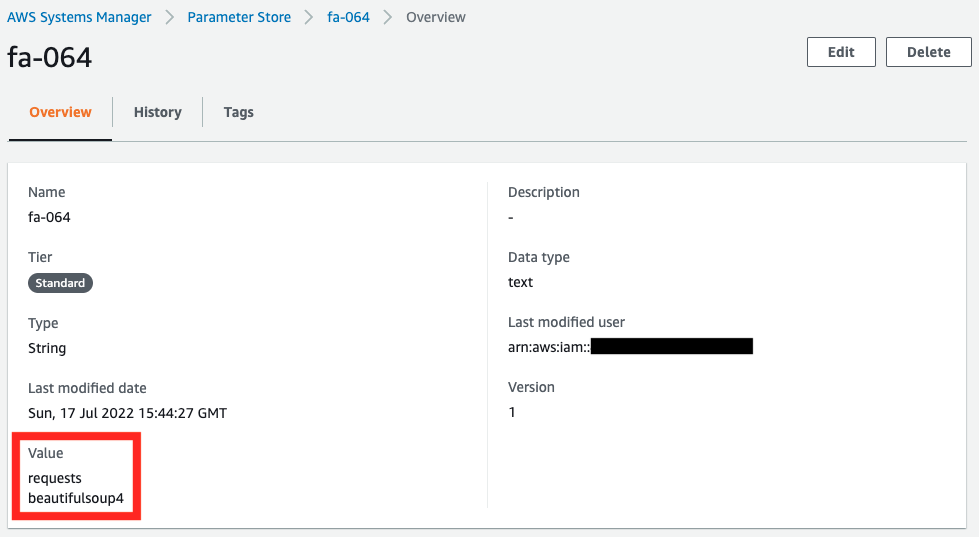

Next, check the values stored in the SSM parameter store.

You can see that the contents of requirements.txt are stored.

Checking the operation

Now that everything is ready, let's check the operation.

Execution result of Lambda function

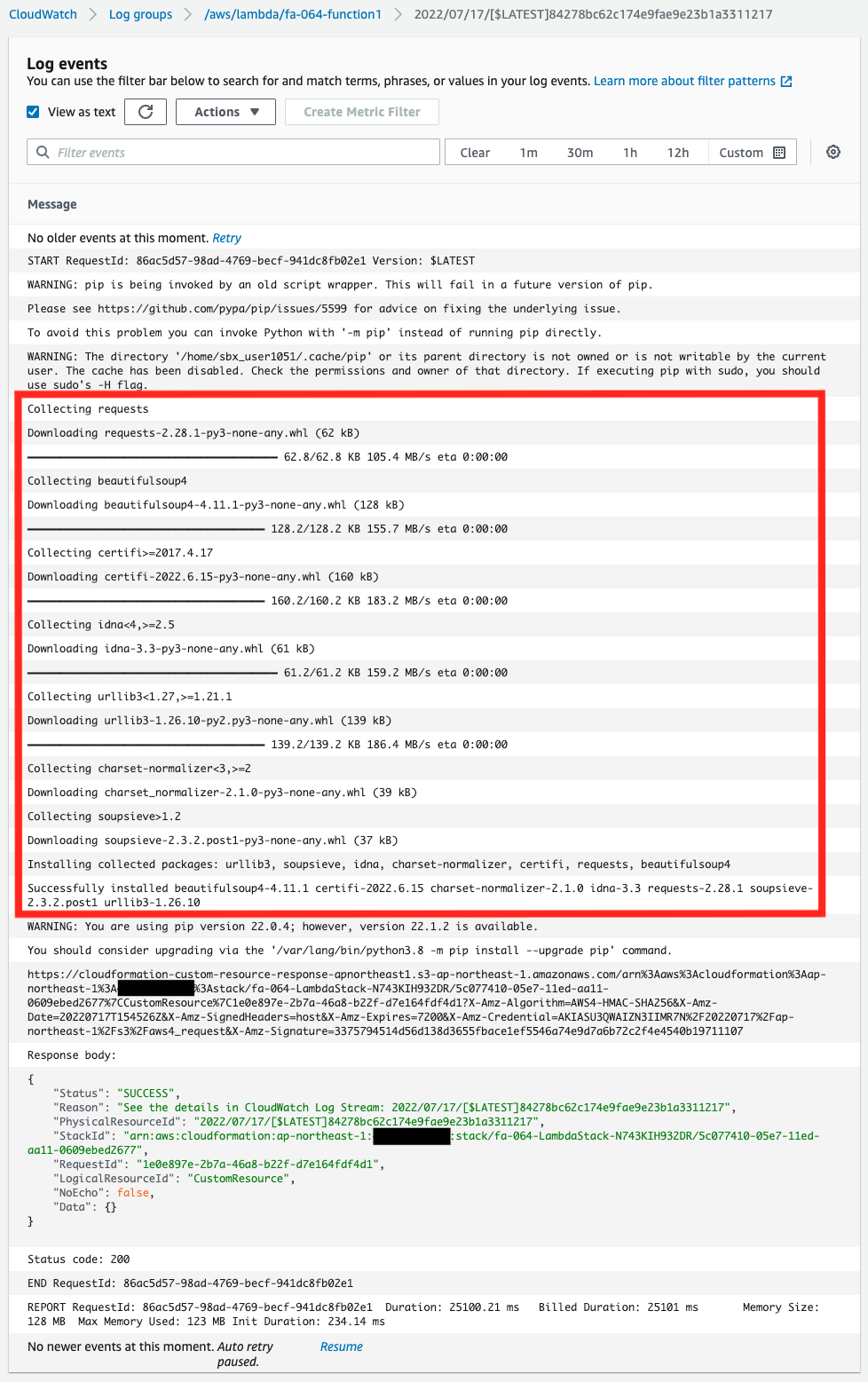

First, check the execution result of the Lambda function associated with the CloudFormation custom resource in the CloudWatch Logs log group.

From the log, we can see that the libraries were installed according to the contents of requirements.txt stored in the SSM parameter store.

This means that the Lambda layer package was successfully created.

We can also see that the function returns “SUCCESS” as a CloudFormation custom resource.

This means that the function has successfully acted as a custom resource.

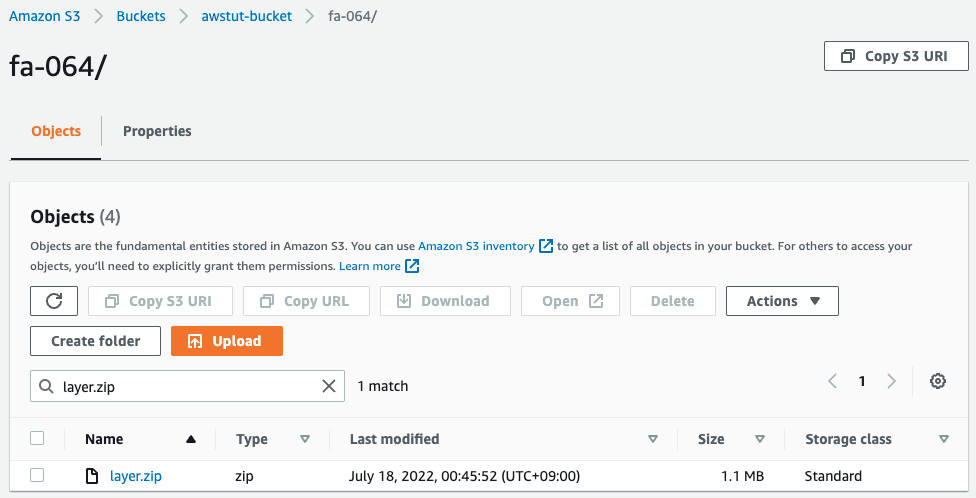

S3 Bucket

Next, we access the S3 bucket to check whether the Lambda layer package has been uploaded.

Indeed, the Lambda layer package (layer.zip) has been placed in the S3 bucket.

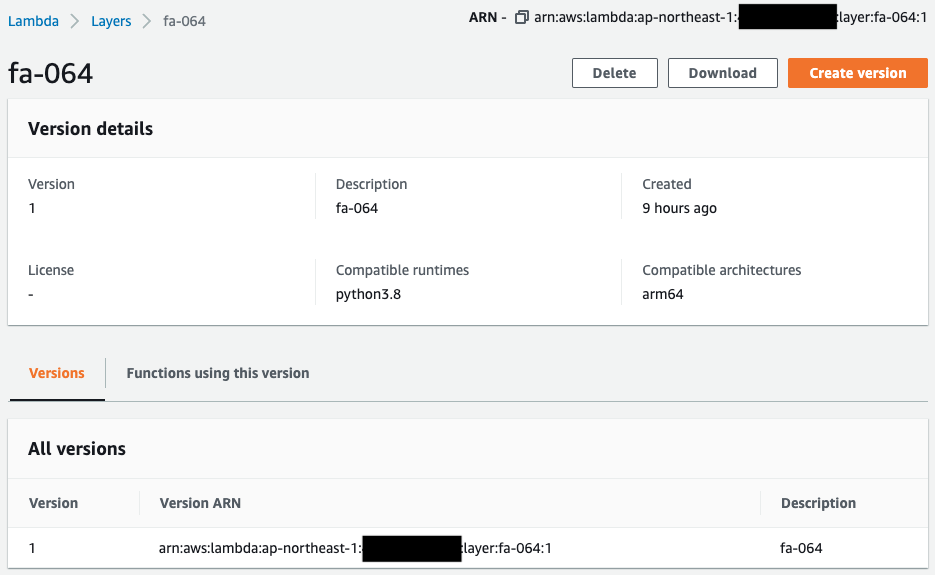

Lambda Layer

Check the creation status of the Lambda layer.

The architecture and runtime are configured as specified in the CloudFormation template.

This layer was created from the ZIP file in the S3 bucket mentioned above.

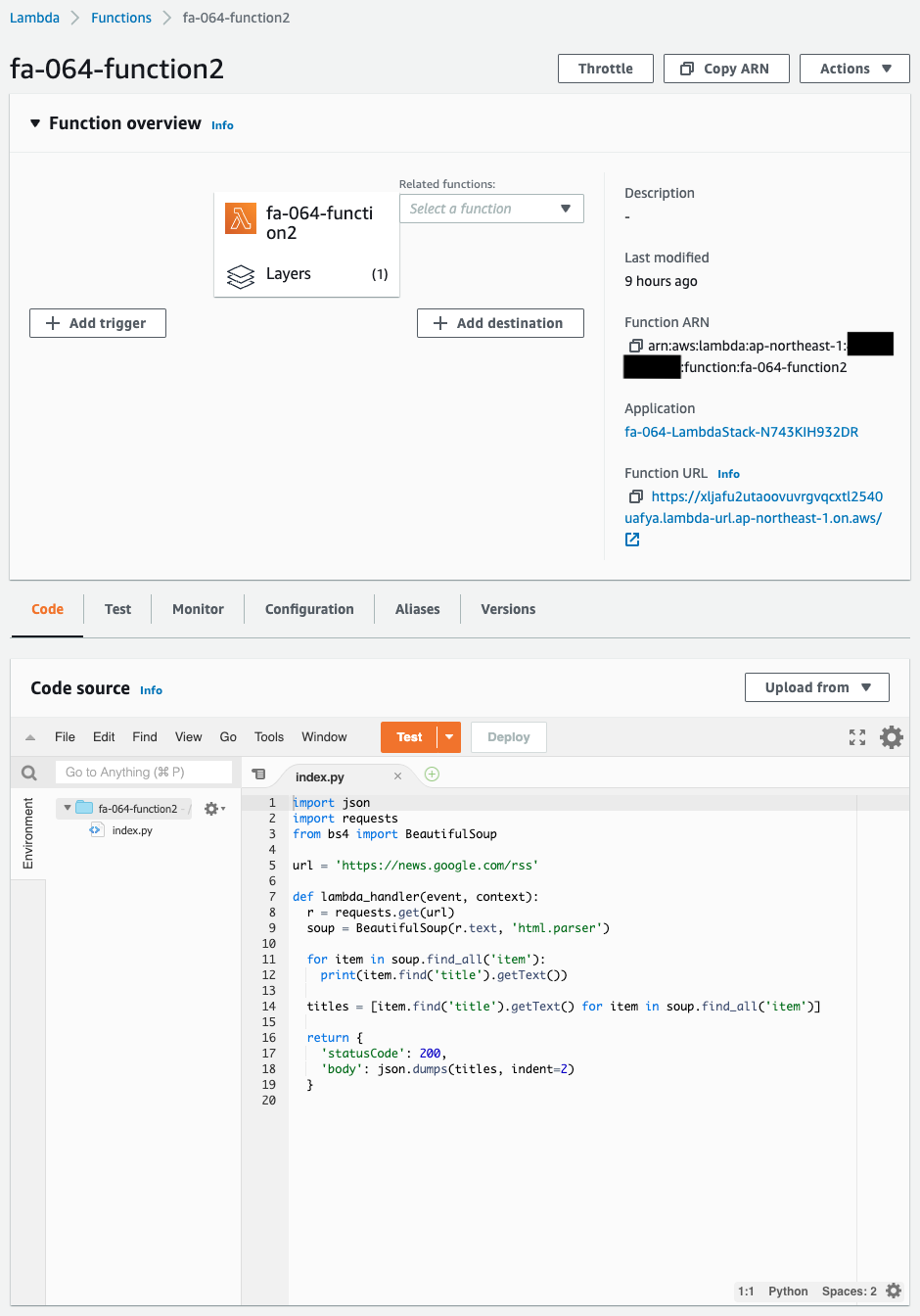

Confirmation Lambda Function

Check the creation status of the confirmation Lambda function.

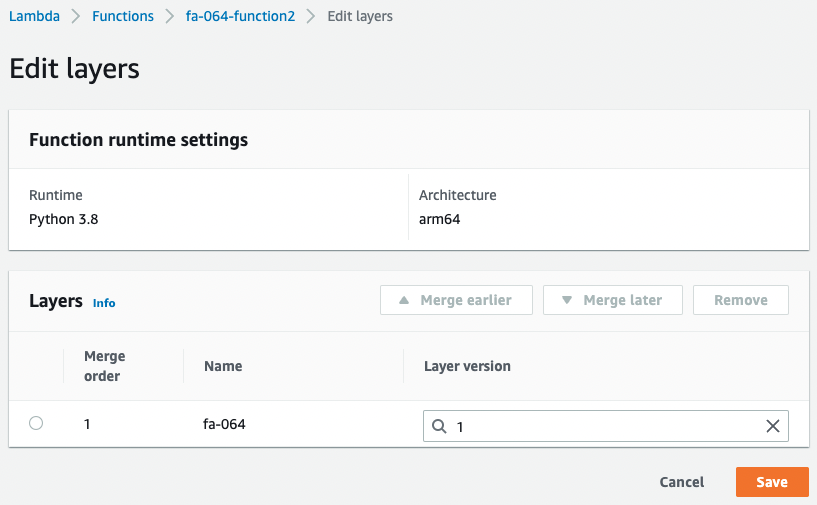

You can see that there is a Lambda layer associated with it.

Below are the details of the layers.

You can see that the aforementioned Lambda layer is associated.

Now that we are ready, we access the Function URL of this function.

For more information on Function URL, please refer to the following page.

The RSS information from Google News was retrieved successfully.

This shows that requests and BeautifulSoup, which are included in the Lambda layer associated with this function, are available to the function.

This confirms that we were able to automatically prepare a Lambda layer package for Python by using CloudFormation custom resources.

Summary

We have confirmed how to automate the process of creating a Lambda layer package for Python and placing it in an S3 bucket using a custom resource.