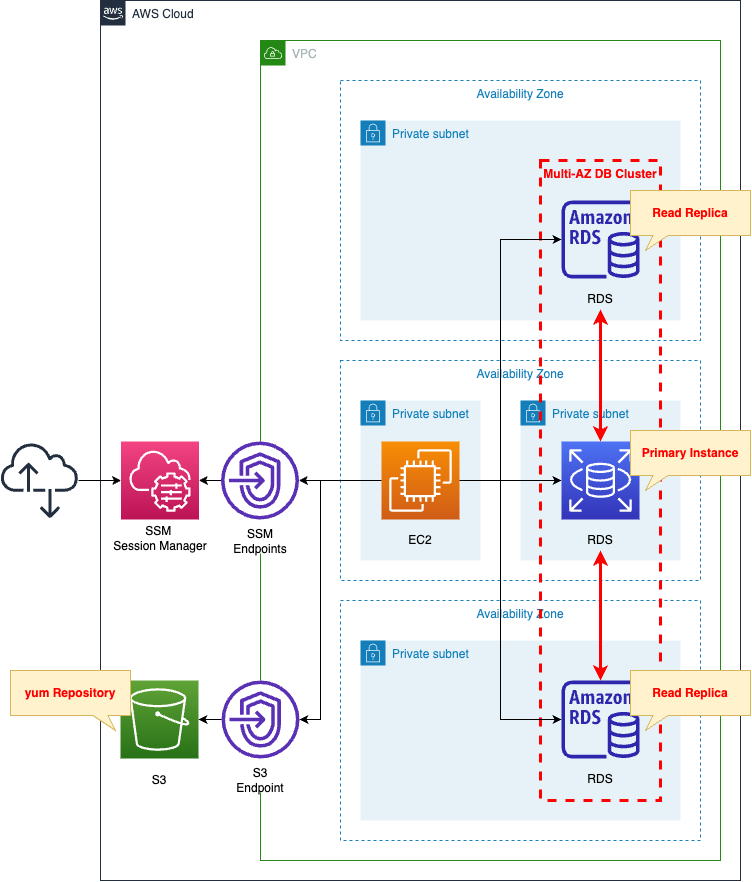

Using CloudFormation to Create an RDS with a Multi-AZ DB Cluster Configuration

The following page covers multi-AZ deployment.

Multi-AZ DB clusters were released in March 2022.

A Multi-AZ DB cluster deployment is a high availability deployment mode of Amazon RDS with two readable standby DB instances.

Multi-AZ DB cluster deployments

On this page, we create a Multi-AZ DB cluster using CloudFormation.

Environment

Create a multi-AZ DB cluster.

The cluster uses the MySQL engine.

Create an EC2 instance.

Use it as a client to connect to the DB cluster.

The instance runs the latest version of Amazon Linux 2.

Create two types of VPC endpoints.

The first is for SSM.

This is to connect to an EC2 instance in a private subnet using SSM Session Manager.

The second is for S3.

This is for accessing the yum repositories, which are hosted in S3 buckets.
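As a rough illustration, the two endpoint types described above might be declared as follows. This is a sketch, not the repository's actual template: the logical names `VPC`, `InstanceSubnet`, `InstanceRouteTable`, and `EndpointSecurityGroup` are hypothetical, and it assumes the S3 endpoint is a gateway endpoint. Session Manager commonly needs the `ssm` and `ssmmessages` interface endpoints (older SSM Agent versions also use `ec2messages`), so the SSM type is shown here as three resources.

```yaml
Resources:
  # Interface endpoints for Session Manager (assumed set of services).
  SSMEndpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      PrivateDnsEnabled: true
      SecurityGroupIds:
        - !Ref EndpointSecurityGroup  # hypothetical; must allow HTTPS (443) from the instance
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.ssm"
      SubnetIds:
        - !Ref InstanceSubnet         # hypothetical private subnet of the EC2 instance
      VpcEndpointType: Interface
      VpcId: !Ref VPC                 # hypothetical VPC logical name

  SSMMessagesEndpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      PrivateDnsEnabled: true
      SecurityGroupIds:
        - !Ref EndpointSecurityGroup
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.ssmmessages"
      SubnetIds:
        - !Ref InstanceSubnet
      VpcEndpointType: Interface
      VpcId: !Ref VPC

  EC2MessagesEndpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      PrivateDnsEnabled: true
      SecurityGroupIds:
        - !Ref EndpointSecurityGroup
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.ec2messages"
      SubnetIds:
        - !Ref InstanceSubnet
      VpcEndpointType: Interface
      VpcId: !Ref VPC

  # Gateway endpoint so that yum can reach the repositories hosted in S3 (assumed type).
  S3Endpoint:
    Type: AWS::EC2::VPCEndpoint
    Properties:
      RouteTableIds:
        - !Ref InstanceRouteTable     # hypothetical route table of the private subnet
      ServiceName: !Sub "com.amazonaws.${AWS::Region}.s3"
      VpcEndpointType: Gateway
      VpcId: !Ref VPC
```

A gateway endpoint is attached to route tables rather than subnets, which is why the S3 resource takes `RouteTableIds` while the interface endpoints take `SubnetIds` and a security group.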

CloudFormation template files

The above configuration is built with CloudFormation.

The CloudFormation templates are available at the following URL:

https://github.com/awstut-an-r/awstut-fa/tree/main/112

Explanation of key points of the template files

RDS

DB Subnet Group

```yaml
Resources:
  DBSubnetGroup:
    Type: AWS::RDS::DBSubnetGroup
    Properties:
      DBSubnetGroupName: !Sub "${Prefix}-dbsubnetgroup"
      DBSubnetGroupDescription: Test DB SubnetGroup.
      SubnetIds:
        - !Ref DBSubnet1
        - !Ref DBSubnet2
        - !Ref DBSubnet3
```

This is the RDS DB subnet group.

The key point is the number of subnets to associate.

The DB subnet group that you choose for the DB cluster must cover at least three Availability Zones. This configuration ensures that each DB instance in the DB cluster is in a different Availability Zone.

Creating a Multi-AZ DB cluster

Following the quotation above, we associate three subnets with the subnet group.
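For reference, the three subnets that the subnet group refers to could be placed in three different Availability Zones along the following lines. This is only a sketch: the `VPC` logical name and the CIDR blocks are hypothetical, and it assumes the Region offers at least three Availability Zones.

```yaml
Resources:
  DBSubnet1:
    Type: AWS::EC2::Subnet
    Properties:
      # !GetAZs "" lists the AZs of the current Region; !Select picks one by index.
      AvailabilityZone: !Select [0, !GetAZs ""]
      CidrBlock: 10.0.2.0/24   # hypothetical CIDR
      VpcId: !Ref VPC          # hypothetical VPC logical name

  DBSubnet2:
    Type: AWS::EC2::Subnet
    Properties:
      AvailabilityZone: !Select [1, !GetAZs ""]
      CidrBlock: 10.0.3.0/24   # hypothetical CIDR
      VpcId: !Ref VPC

  DBSubnet3:
    Type: AWS::EC2::Subnet
    Properties:
      AvailabilityZone: !Select [2, !GetAZs ""]
      CidrBlock: 10.0.4.0/24   # hypothetical CIDR
      VpcId: !Ref VPC
```

Selecting a different index for each subnet is what guarantees that the subnet group spans three Availability Zones.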

DB Cluster

```yaml
Resources:
  DBCluster:
    Type: AWS::RDS::DBCluster
    DeletionPolicy: Delete
    Properties:
      AllocatedStorage: !Ref DBAllocatedStorage
      AutoMinorVersionUpgrade: true
      DatabaseName: !Ref DBName
      DBClusterIdentifier: !Sub "${Prefix}-dbcluster"
      DBClusterInstanceClass: !Ref DBClusterInstanceClass
      DBClusterParameterGroupName: !Ref DBClusterParameterGroup
      DBSubnetGroupName: !Ref DBSubnetGroup
      Engine: !Ref DBEngine
      EngineVersion: !Ref DBEngineVersion
      Iops: !Ref DBIops
      MasterUsername: !Ref DBMasterUsername
      MasterUserPassword: !Ref DBMasterUserPassword
      Port: !Ref DBPort
      StorageType: io1
      VpcSecurityGroupIds:
        - !Ref DBSecurityGroup
```

The key points are covered below.

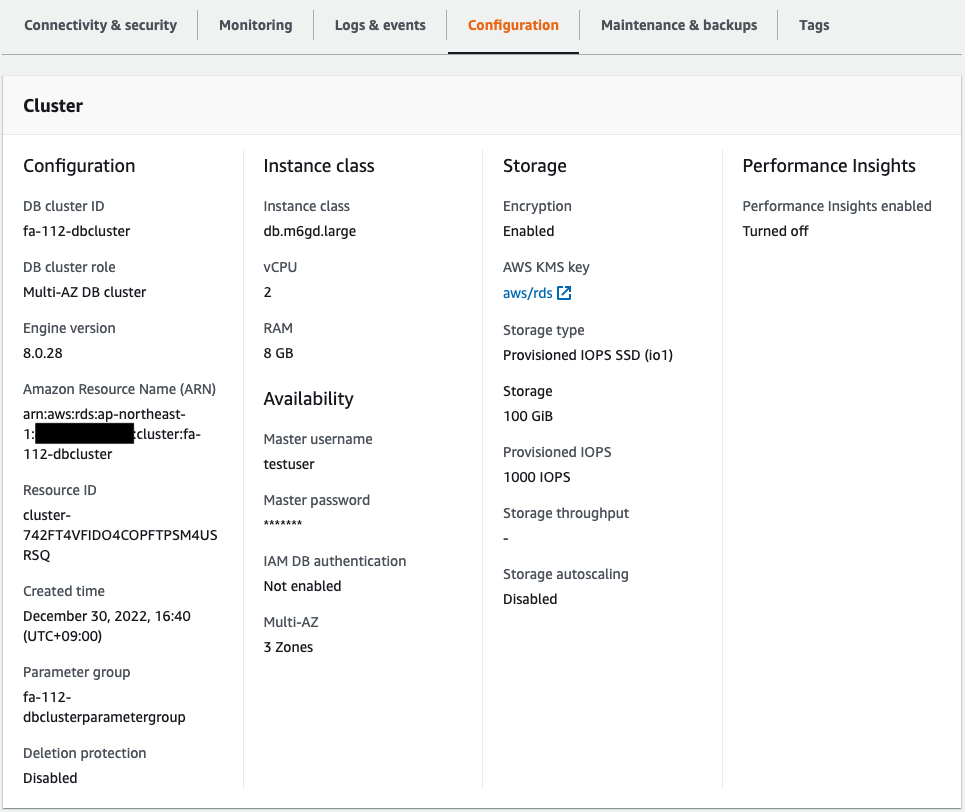

The first point is the storage type.

Multi-AZ DB clusters only support Provisioned IOPS storage.

Limitations for Multi-AZ DB clusters

Follow the quote above and specify “io1” for the StorageType property.

The allocated storage and IOPS are also important.

According to the official AWS documentation, for the MySQL engine the allowed ranges for the two parameters are as follows:

- Storage: 100 GiB – 64 TiB

- Provisioned IOPS: 1,000-256,000 IOPS

So this time we specify “100 (GiB)” for the AllocatedStorage property and “1000 (IOPS)” for the Iops property.
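One way to keep these values inside the documented ranges is to constrain the template parameters themselves. The following is a sketch under that assumption, not the repository's actual parameter definitions; the parameter names match the `!Ref` targets in the DB cluster resource, and 64 TiB is expressed as 65536 GiB.

```yaml
Parameters:
  DBAllocatedStorage:
    Type: Number
    Default: 100        # GiB, the minimum used in this article
    MinValue: 100
    MaxValue: 65536     # 64 TiB expressed in GiB
    Description: Storage to allocate to the DB cluster, in GiB.

  DBIops:
    Type: Number
    Default: 1000       # the minimum used in this article
    MinValue: 1000
    MaxValue: 256000
    Description: Provisioned IOPS for the DB cluster.
```

With `MinValue` and `MaxValue` set, CloudFormation rejects an out-of-range value when the stack is created or updated, before RDS is ever called.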

Specify the instance class in DBClusterInstanceClass.

At the time of writing, the instance classes that can be specified for a Multi-AZ DB cluster are “db.m6gd” and “db.m5d”.

Therefore, we specify “db.m6gd.large”, the smallest size of the former.

Specify the engine type of the DB cluster to be created in the Engine and EngineVersion properties.

Multi-AZ DB clusters support two types of DB engines.

Multi-AZ DB clusters are supported only for the MySQL and PostgreSQL DB engines.

Creating a Multi-AZ DB cluster

As quoted above, specify “mysql” for the former and “8.0.28” for the latter.
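The remaining values referenced by the DB cluster resource could be supplied through parameters such as the following. This is a hedged sketch: the defaults for the instance class, engine, and version come from the text above, while `testdb`, `testuser`, and port 3306 are taken from the connection command used later on this page. The `AllowedValues`, `NoEcho`, and `MinLength` settings are additions for illustration and are not confirmed to be in the original template.

```yaml
Parameters:
  DBClusterInstanceClass:
    Type: String
    Default: db.m6gd.large

  DBEngine:
    Type: String
    Default: mysql
    AllowedValues:       # the two engines supported by Multi-AZ DB clusters
      - mysql
      - postgres

  DBEngineVersion:
    Type: String
    Default: "8.0.28"

  DBName:
    Type: String
    Default: testdb

  DBMasterUsername:
    Type: String
    Default: testuser

  DBMasterUserPassword:
    Type: String
    NoEcho: true         # masks the value in the console and API output
    MinLength: 8         # assumption: RDS for MySQL requires at least 8 characters

  DBPort:
    Type: Number
    Default: 3306
```

Leaving `DBMasterUserPassword` without a default means the password must be passed in at stack creation time and is not stored in the template file.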

(Reference) EC2 instance

```yaml
Resources:
  Instance:
    Type: AWS::EC2::Instance
    Properties:
      IamInstanceProfile: !Ref InstanceProfile
      ImageId: !Ref ImageId
      InstanceType: !Ref InstanceType
      NetworkInterfaces:
        - DeviceIndex: 0
          SubnetId: !Ref InstanceSubnet
          GroupSet:
            - !Ref InstanceSecurityGroup
      UserData: !Base64 |
        #!/bin/bash -xe
        yum update -y
        yum install -y mariadb
```

To access a DB instance from an EC2 instance, a client package must be installed.

In this case, we will use user data to install the package.

For more information on user data, see the following page

For more information about client packages to connect to various RDS from Amazon Linux 2, please refer to the following page
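The EC2 resource above references several things that are not shown on this page. A minimal sketch of what they might look like follows. All of it is an assumption for illustration: the `InstanceRole` and `VPC` logical names are hypothetical, the `t4g.nano` default is a guess based only on the aarch64 packages seen later, and the AMI is resolved from the public Systems Manager parameter for the latest Amazon Linux 2 arm64 image.

```yaml
Parameters:
  ImageId:
    # Resolves to the latest Amazon Linux 2 (arm64) AMI at deploy time.
    Type: AWS::SSM::Parameter::Value<AWS::EC2::Image::Id>
    Default: /aws/service/ami-amazon-linux-latest/amzn2-ami-hvm-arm64-gp2

  InstanceType:
    Type: String
    Default: t4g.nano    # hypothetical Graviton instance type

Resources:
  InstanceRole:          # hypothetical logical name
    Type: AWS::IAM::Role
    Properties:
      AssumeRolePolicyDocument:
        Version: "2012-10-17"
        Statement:
          - Effect: Allow
            Principal:
              Service: ec2.amazonaws.com
            Action: sts:AssumeRole
      ManagedPolicyArns:
        # Grants the permissions the SSM Agent needs for Session Manager.
        - arn:aws:iam::aws:policy/AmazonSSMManagedInstanceCore

  InstanceProfile:
    Type: AWS::IAM::InstanceProfile
    Properties:
      Roles:
        - !Ref InstanceRole

  InstanceSecurityGroup:
    Type: AWS::EC2::SecurityGroup
    Properties:
      GroupDescription: Security group for the client instance.
      VpcId: !Ref VPC    # hypothetical VPC logical name

  DBSecurityGroup:
    Type: AWS::EC2::SecurityGroup
    Properties:
      GroupDescription: Allow MySQL traffic from the client instance.
      VpcId: !Ref VPC
      SecurityGroupIngress:
        - IpProtocol: tcp
          FromPort: !Ref DBPort
          ToPort: !Ref DBPort
          SourceSecurityGroupId: !Ref InstanceSecurityGroup
```

Because this fragment creates an IAM role, a stack containing it has to be created with the `CAPABILITY_IAM` acknowledgement.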

Architecting

Use CloudFormation to build this environment and check the actual behavior.

Create CloudFormation stacks and check the resources in the stacks

Create CloudFormation stacks.

For information on how to create stacks and check each stack, please refer to the following page

After checking the resources in each stack, information on the main resources created this time is as follows

- EC2 instance: i-0c11a6ebbe7a67b9c

- DB cluster: fa-112-dbcluster

- DB cluster write endpoint: fa-112-dbcluster.cluster-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com

- DB cluster read endpoint: fa-112-dbcluster.cluster-ro-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
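Values like the ones listed above can also be surfaced directly from the stack instead of being looked up in the console. The following `Outputs` section is a sketch that assumes the `DBCluster` and `Instance` logical names used earlier on this page; the output names themselves are hypothetical.

```yaml
Outputs:
  InstanceId:
    Description: ID of the client EC2 instance.
    Value: !Ref Instance

  WriterEndpoint:
    Description: Write endpoint of the DB cluster.
    Value: !GetAtt DBCluster.Endpoint.Address

  ReaderEndpoint:
    Description: Read endpoint of the DB cluster.
    Value: !GetAtt DBCluster.ReadEndpoint.Address
```

The outputs then appear on the stack's Outputs tab and in the result of describing the stack.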

Check each resource from the AWS Management Console.

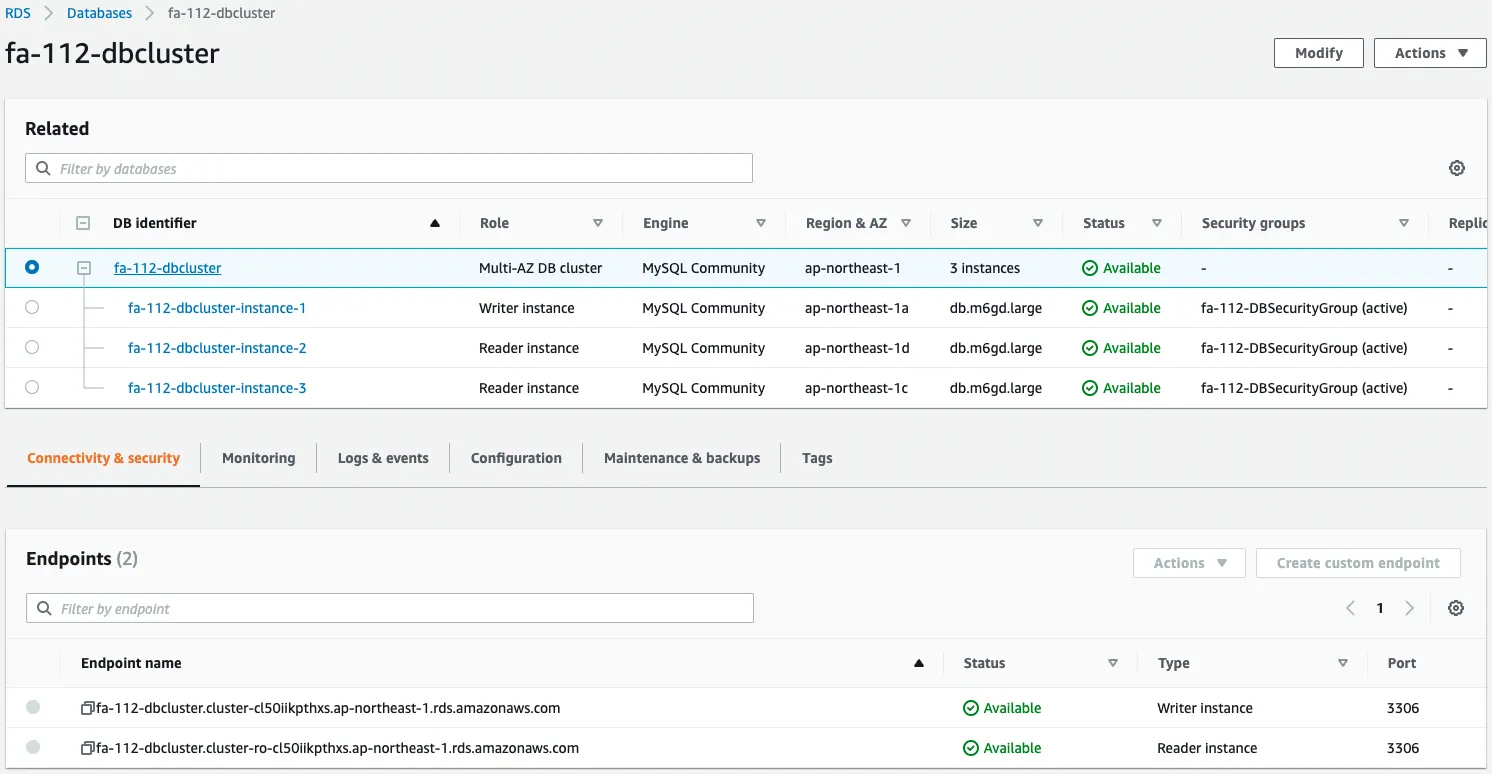

Check the DB cluster.

The DB cluster has been successfully created.

Three DB instances have been created in the cluster, one in each of the three AZs.

You can also see that instance 1 is for writing and the other two instances (instance 2 and instance 3) are for reading.

We can also see that two types of endpoints are prepared.

One is for writing and the other is for reading.

We also check the configuration details.

The instance class, storage, IOPS, etc. are set as specified in the CloudFormation template.

Checking the Behavior

Connect to the DB cluster

Connect to the primary instance from an EC2 instance.

Use SSM Session Manager to access the EC2 instance.

```bash
% aws ssm start-session --target i-0c11a6ebbe7a67b9c
...
sh-4.2$
```

For more information on SSM Session Manager, please see the following page

Resolve the names of the two DB cluster endpoints.

```bash
sh-4.2$ nslookup fa-112-dbcluster.cluster-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Server: 10.0.0.2
Address: 10.0.0.2#53

Non-authoritative answer:
fa-112-dbcluster.cluster-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com canonical name = fa-112-dbcluster-instance-1.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com.
Name: fa-112-dbcluster-instance-1.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Address: 10.0.2.137

sh-4.2$ nslookup fa-112-dbcluster.cluster-ro-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Server: 10.0.0.2
Address: 10.0.0.2#53

Non-authoritative answer:
fa-112-dbcluster.cluster-ro-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com canonical name = fa-112-dbcluster-instance-3.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com.
Name: fa-112-dbcluster-instance-3.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Address: 10.0.3.101
```

From the name resolution results, we can see that the write endpoint resolves to instance 1 and the read endpoint resolves to instance 3.

Check the execution status of the EC2 instance initialization process by user data.

```bash
sh-4.2$ sudo yum list installed | grep mariadb
mariadb.aarch64 1:5.5.68-1.amzn2 @amzn2-core
mariadb-libs.aarch64 1:5.5.68-1.amzn2 installed

sh-4.2$ mysql -V
mysql Ver 15.1 Distrib 5.5.68-MariaDB, for Linux (aarch64) using readline 5.1
```

You can see that the MySQL client package has been successfully installed.

Use this client package to connect to the write endpoint.

```bash
sh-4.2$ mysql -h fa-112-dbcluster.cluster-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com -P 3306 -u testuser -D testdb -p
Enter password:
Welcome to the MariaDB monitor. Commands end with ; or \g.
Your MySQL connection id is 26
Server version: 8.0.28 Source distribution

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MySQL [testdb]>
```

The connection has been established.

Create a test table and save test data.

```bash
MySQL [testdb]> CREATE TABLE planet (id INT UNSIGNED AUTO_INCREMENT, name VARCHAR(30), PRIMARY KEY(id));

MySQL [testdb]> INSERT INTO planet (name) VALUES ("Mercury");

MySQL [testdb]> select * from planet;
+----+---------+
| id | name    |
+----+---------+
|  1 | Mercury |
+----+---------+
```

The write succeeded.

Next, connect to the read endpoint.

```bash
sh-4.2$ mysql -h fa-112-dbcluster.cluster-ro-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com -P 3306 -u testuser -D testdb -p
...
MySQL [testdb]>
```

This connection was also successful.

```bash
MySQL [testdb]> select * from planet;
+----+---------+
| id | name    |
+----+---------+
|  1 | Mercury |
+----+---------+

MySQL [testdb]> INSERT INTO planet (name) VALUES ("Venus");
ERROR 1290 (HY000): The MySQL server is running with the --read-only option so it cannot execute this statement
```

The read was successful.

The data written to instance 1, the writer instance, is also visible on instance 3, a reader instance.

On the other hand, the write failed.

This is because instance 3 is a read-only instance.

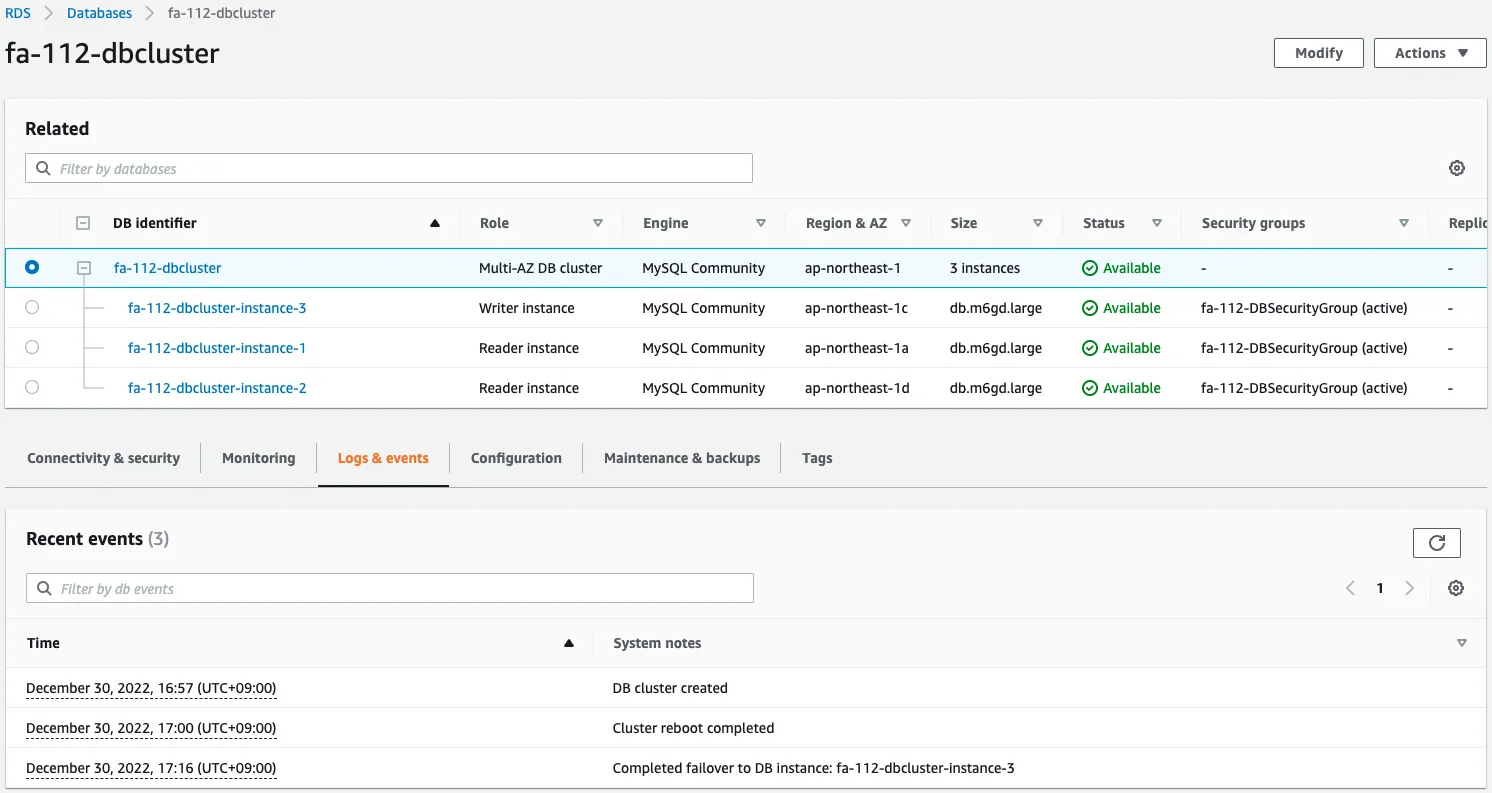

Failover

Normally, when the writer instance fails, the cluster automatically fails over to one of the reader instances.

In this case, we use the AWS CLI to trigger a failover manually.

```bash
$ aws rds failover-db-cluster \
    --db-cluster-identifier fa-112-dbcluster
```

Check the status of the DB cluster again.

The failover resulted in instance 3 becoming a writer instance and the other two instances becoming reader instances.

The event log shows that instance 3 has been promoted to writer.

Once again, we also check the name resolution status of each endpoint.

```bash
sh-4.2$ nslookup fa-112-dbcluster.cluster-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Server: 10.0.0.2
Address: 10.0.0.2#53

Non-authoritative answer:
fa-112-dbcluster.cluster-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com canonical name = fa-112-dbcluster-instance-3.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com.
Name: fa-112-dbcluster-instance-3.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Address: 10.0.3.101

sh-4.2$ nslookup fa-112-dbcluster.cluster-ro-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Server: 10.0.0.2
Address: 10.0.0.2#53

Non-authoritative answer:
fa-112-dbcluster.cluster-ro-cl50iikpthxs.ap-northeast-1.rds.amazonaws.com canonical name = fa-112-dbcluster-instance-1.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com.
Name: fa-112-dbcluster-instance-1.cl50iikpthxs.ap-northeast-1.rds.amazonaws.com
Address: 10.0.2.137
```

We can see that the write endpoint now resolves to instance 3 and the read endpoint resolves to instance 1.

Conclusion

We have created a Multi-AZ DB Cluster using CloudFormation.