Define an action in CodePipeline that calls a Lambda function to change the desired number of Fargate tasks

CodePipeline can be configured with a variety of actions. This article looks at calling a Lambda function from within a pipeline.

Specifically, the function changes the desired number of tasks in an ECS (Fargate) service.

Environment

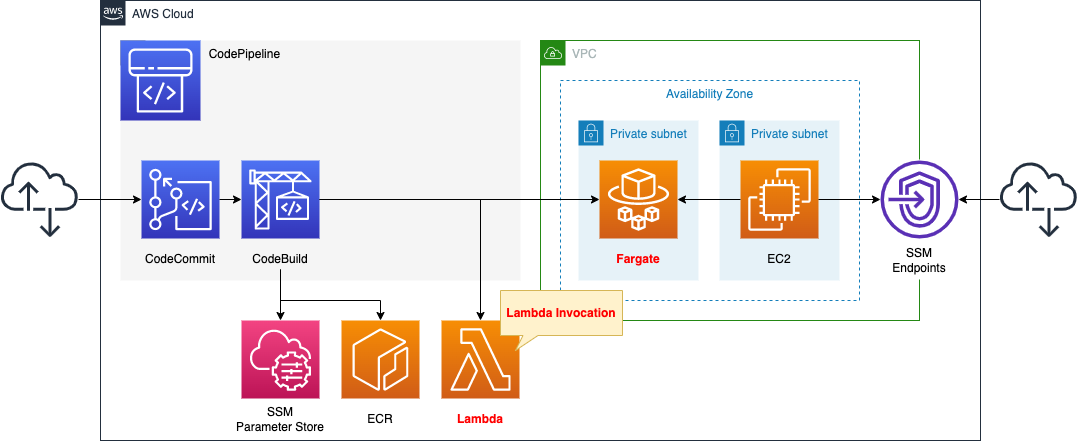

We will configure CodePipeline to link three resources.

The first is CodeCommit.

CodeCommit is responsible for the source stage of CodePipeline.

It is used as a Git repository.

The second is CodeBuild.

CodeBuild is in charge of the build stage of CodePipeline.

It builds a Docker image from code pushed to CodeCommit.

The built image is pushed to ECR.

The third is the Lambda function.

The function’s job is to change the desired number of tasks for the ECS (Fargate) service.

Specifically, it changes the desired number from 0 to 1.

Create a deploy stage in CodePipeline.

Configure it to deploy to Fargate as described below.

Save your DockerHub account information in the SSM Parameter Store.

CodeBuild uses it to sign in to DockerHub before pulling the base image during the image build.

CodePipeline starts when code is pushed to CodeCommit.

Specifically, an EventBridge rule detects the push and triggers the pipeline.
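As a rough illustration of what such a rule matches, the sketch below builds an EventBridge event pattern for pushes to a single CodeCommit branch. The function name, the ARN, and the account ID are placeholders invented for this example; the pattern in the actual template may differ.

```python
import json


def codecommit_push_pattern(repository_arn: str, branch: str) -> dict:
    """Build an EventBridge event pattern matching pushes to one branch."""
    return {
        "source": ["aws.codecommit"],
        "detail-type": ["CodeCommit Repository State Change"],
        "resources": [repository_arn],
        "detail": {
            # The first push creates the branch; later pushes update it.
            "event": ["referenceCreated", "referenceUpdated"],
            "referenceType": ["branch"],
            "referenceName": [branch],
        },
    }


# Hypothetical ARN: the account ID is a placeholder.
example_arn = "arn:aws:codecommit:ap-northeast-1:123456789012:fa-079"
print(json.dumps(codecommit_push_pattern(example_arn, "master"), indent=2))
```

The resulting dictionary corresponds to the EventPattern of an AWS::Events::Rule whose target is the pipeline.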

Create a Fargate-type ECS service on a private subnet.

Create an EC2 instance.

Use it as a client to access containers created on Fargate.

CloudFormation template files

Build the above configuration with CloudFormation.

The CloudFormation templates are located at the following URL:

https://github.com/awstut-an-r/awstut-fa/tree/main/079

Explanation of key points of the template files

This page focuses on how to call Lambda functions within CodePipeline.

For basic information about CodePipeline, please refer to the following page

For information on how to create a deployment stage in CodePipeline and deploy to ECS (Fargate), please refer to the following page

For information on how to build Fargate on a private subnet, please refer to the following page

Use CloudFormation custom resources to automatically delete objects in S3 buckets and images in ECR repositories when deleting the CloudFormation stack.

For more information, please see the following page


CodePipeline

    Resources:
      Pipeline:
        Type: AWS::CodePipeline::Pipeline
        Properties:
          ArtifactStore:
            Location: !Ref BucketName
            Type: S3
          Name: !Ref Prefix
          RoleArn: !GetAtt CodePipelineRole.Arn
          Stages:
            - Actions:
                - ActionTypeId:
                    Category: Source
                    Owner: AWS
                    Provider: CodeCommit
                    Version: 1
                  Configuration:
                    BranchName: !Ref BranchName
                    OutputArtifactFormat: CODE_ZIP
                    PollForSourceChanges: false
                    RepositoryName: !GetAtt CodeCommitRepository.Name
                  Name: SourceAction
                  OutputArtifacts:
                    - Name: !Ref PipelineSourceArtifact
                  Region: !Ref AWS::Region
                  RunOrder: 1
              Name: Source
            - Actions:
                - ActionTypeId:
                    Category: Build
                    Owner: AWS
                    Provider: CodeBuild
                    Version: 1
                  Configuration:
                    ProjectName: !Ref CodeBuildProject
                  InputArtifacts:
                    - Name: !Ref PipelineSourceArtifact
                  Name: Build
                  OutputArtifacts:
                    - Name: !Ref PipelineBuildArtifact
                  Region: !Ref AWS::Region
                  RunOrder: 1
              Name: Build
            - Actions:
                - ActionTypeId:
                    Category: Deploy
                    Owner: AWS
                    Provider: ECS
                    Version: 1
                  Configuration:
                    ClusterName: !Ref ECSClusterName
                    FileName: !Ref ImageDefinitionFileName
                    ServiceName: !Ref ECSServiceName
                  InputArtifacts:
                    - Name: !Ref PipelineBuildArtifact
                  Name: Deploy
                  Region: !Ref AWS::Region
                  RunOrder: 1
              Name: Deploy
            - Actions:
                - ActionTypeId:
                    Category: Invoke
                    Owner: AWS
                    Provider: Lambda
                    Version: 1
                  Configuration:
                    FunctionName: !Ref ECSFunctionName
                  InputArtifacts: []
                  Name: Invoke
                  OutputArtifacts: []
                  Region: !Ref AWS::Region
                  RunOrder: 1
              Name: Invoke

Define the stages for invoking Lambda functions in the Stages property.

Set the function to invoke in the Configuration property by specifying its name in FunctionName.

Leave InputArtifacts and OutputArtifacts empty. The function only adjusts the desired number of ECS tasks, so it neither reads nor writes artifacts.
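To make the shape of the invocation concrete, here is a minimal sketch of how a function could read the event that CodePipeline passes to an Invoke action. The sample event is hand-written and abbreviated, and the use of UserParameters (an optional setting of the Lambda invoke action) is an assumption for illustration; the template in this article passes its values through environment variables instead.

```python
import json
from typing import Tuple


def parse_job(event: dict) -> Tuple[str, dict]:
    """Return the job ID and decoded UserParameters from a CodePipeline event."""
    job = event["CodePipeline.job"]
    configuration = job["data"]["actionConfiguration"]["configuration"]
    # UserParameters is an optional string; this sketch assumes it holds JSON.
    raw = configuration.get("UserParameters", "")
    params = json.loads(raw) if raw else {}
    return job["id"], params


# Hand-written, abbreviated sample; real events carry additional fields.
sample_event = {
    "CodePipeline.job": {
        "id": "11111111-abcd-1111-abcd-111111abcdef",
        "data": {
            "actionConfiguration": {
                "configuration": {
                    "FunctionName": "fa-079-function-ecs",
                    "UserParameters": '{"count": 1}',
                }
            },
            # Empty because the action declares no artifacts.
            "inputArtifacts": [],
            "outputArtifacts": [],
        },
    }
}

job_id, params = parse_job(sample_event)
print(job_id, params)
```

The job ID is the value the function later hands back to CodePipeline when it reports success or failure.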

Here is the IAM role for CodePipeline:

    Resources:
      CodePipelineRole:
        Type: AWS::IAM::Role
        Properties:
          AssumeRolePolicyDocument:
            Version: 2012-10-17
            Statement:
              - Effect: Allow
                Principal:
                  Service:
                    - codepipeline.amazonaws.com
                Action:
                  - sts:AssumeRole
          Policies:
            - PolicyName: PipelinePolicy
              PolicyDocument:
                Version: 2012-10-17
                Statement:
                  - Effect: Allow
                    Action:
                      - lambda:invokeFunction
                    Resource:
                      - !Sub "arn:aws:lambda:${AWS::Region}:${AWS::AccountId}:function:${ECSFunctionName}"
                  - Effect: Allow
                    Action:
                      - codecommit:CancelUploadArchive
                      - codecommit:GetBranch
                      - codecommit:GetCommit
                      - codecommit:GetRepository
                      - codecommit:GetUploadArchiveStatus
                      - codecommit:UploadArchive
                    Resource:
                      - !GetAtt CodeCommitRepository.Arn
                  - Effect: Allow
                    Action:
                      - codebuild:BatchGetBuilds
                      - codebuild:StartBuild
                      - codebuild:BatchGetBuildBatches
                      - codebuild:StartBuildBatch
                    Resource:
                      - !GetAtt CodeBuildProject.Arn
                  - Effect: Allow
                    Action:
                      - s3:PutObject
                      - s3:GetObject
                      - s3:GetObjectVersion
                      - s3:GetBucketAcl
                      - s3:GetBucketLocation
                    Resource:
                      - !Sub "arn:aws:s3:::${BucketName}"
                      - !Sub "arn:aws:s3:::${BucketName}/*"
                  - Effect: Allow
                    Action:
                      - ecs:*
                    Resource: "*"
                  - Effect: Allow
                    Action:
                      - iam:PassRole
                    Resource: "*"
                    Condition:
                      StringLike:
                        iam:PassedToService:
                          - ecs-tasks.amazonaws.com

Set permissions to call Lambda functions.

Lambda Function

    Resources:
      ECSFunction:
        Type: AWS::Lambda::Function
        Properties:
          Code:
            ZipFile: |
              import boto3
              import os

              cluster_name = os.environ['CLUSTER_NAME']
              count = int(os.environ['COUNT'])
              service_name = os.environ['SERVICE_NAME']

              ecs_client = boto3.client('ecs')
              codepipeline_client = boto3.client('codepipeline')

              def lambda_handler(event, context):
                  job_id = event['CodePipeline.job']['id']

                  try:
                      describe_services_response = ecs_client.describe_services(
                          cluster=cluster_name,
                          services=[
                              service_name
                          ]
                      )
                      print(describe_services_response)

                      if describe_services_response['services'][0]['desiredCount'] > 0:
                          codepipeline_client.put_job_success_result(
                              jobId=job_id
                          )
                          return

                      update_service_response = ecs_client.update_service(
                          cluster=cluster_name,
                          service=service_name,
                          desiredCount=count
                      )
                      print(update_service_response)

                      codepipeline_client.put_job_success_result(
                          jobId=job_id
                      )

                  except Exception as e:
                      print(e)
                      codepipeline_client.put_job_failure_result(
                          jobId=job_id,
                          failureDetails={
                              'type': 'JobFailed',
                              'message': 'Something happened.'
                          }
                      )
          Environment:
            Variables:
              CLUSTER_NAME: !Ref ECSClusterName
              COUNT: 1
              SERVICE_NAME: !Ref ECSServiceName
          FunctionName: !Sub "${Prefix}-function-ecs"
          Handler: !Ref Handler
          Runtime: !Ref Runtime
          Role: !GetAtt ECSFunctionRole.Arn

Define the code to be executed by the Lambda function in inline notation.

For more information, please refer to the following page

The Environment property defines environment variables that are passed to the function.

Specifically, it sets the ECS cluster and service whose task count will be adjusted, and the desired count to apply.

The code works as follows:

- Use the describe_services method to get the status of the ECS service and check its desired number.

- If the desired number is already greater than 0, report success to CodePipeline and exit; if it is 0, continue.

- Use the update_service method to change the desired number of the ECS service.

- Call the CodePipeline API to report the result.

The last step is the key point.

The official AWS page mentions the following

As part of the implementation of the Lambda function, there must be a call to either the PutJobSuccessResult API or PutJobFailureResult API. Otherwise, the execution of this action hangs until the action times out.

AWS Lambda

This means that after changing the desired number of tasks, one of the above two APIs must be called.

In this case, we call the PutJobSuccessResult API (put_job_success_result method) if the desired number of tasks was changed successfully, and the PutJobFailureResult API (put_job_failure_result method) if any error occurs during processing.
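The control flow described above can be tried locally without AWS access. The sketch below restates it as a plain function that takes its clients as arguments; FakeEcs and FakePipeline are hypothetical stand-ins written for this example, not part of boto3, and the function name is also invented here.

```python
def scale_up_if_stopped(ecs, pipeline, job_id, cluster, service, count):
    """Raise desiredCount from 0 to `count`, then report the job result."""
    try:
        response = ecs.describe_services(cluster=cluster, services=[service])
        if response["services"][0]["desiredCount"] == 0:
            ecs.update_service(cluster=cluster, service=service, desiredCount=count)
        # Reached whether or not an update was needed.
        pipeline.put_job_success_result(jobId=job_id)
        return True
    except Exception as error:
        # Without this call the pipeline action would wait until it times out.
        pipeline.put_job_failure_result(
            jobId=job_id,
            failureDetails={"type": "JobFailed", "message": str(error)},
        )
        return False


class FakeEcs:
    """Hypothetical stand-in for boto3.client('ecs'), for local runs only."""

    def __init__(self, desired):
        self.desired = desired

    def describe_services(self, cluster, services):
        return {"services": [{"desiredCount": self.desired}]}

    def update_service(self, cluster, service, desiredCount):
        self.desired = desiredCount
        return {"service": {"desiredCount": desiredCount}}


class FakePipeline:
    """Hypothetical stand-in for boto3.client('codepipeline')."""

    def __init__(self):
        self.results = []

    def put_job_success_result(self, jobId):
        self.results.append(("success", jobId))

    def put_job_failure_result(self, jobId, failureDetails):
        self.results.append(("failure", jobId))


ecs, pipeline = FakeEcs(desired=0), FakePipeline()
scale_up_if_stopped(ecs, pipeline, "job-1", "cluster", "service", 1)
print(ecs.desired, pipeline.results)
```

Every path through the function ends in exactly one of the two result calls, which is the property the AWS documentation quoted above requires.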

(Reference) Application Container

Dockerfile

    FROM amazonlinux
    RUN yum update -y && yum install python3 python3-pip -y
    RUN pip3 install bottle
    COPY main.py ./
    CMD ["python3", "main.py"]
    EXPOSE 8080

The image for the app container will be based on Amazon Linux 2.

We will use Bottle, a Python web framework.

So after installing Python and pip, install Bottle.

Copy the Python script (main.py) containing the app logic and set it to run when the container starts.

The app listens for HTTP requests on 8080/tcp, so expose this port.

main.py

    from bottle import route, run

    @route('/')
    def hello():
        return 'Hello CodePipeline.'

    if __name__ == '__main__':
        run(host='0.0.0.0', port=8080)

We will use Bottle to build a simple web server.

It simply listens for HTTP requests on 8080/tcp and returns “Hello CodePipeline.”

Architecting

Use CloudFormation to build this environment and check the actual behavior.

Create CloudFormation stacks and check resources in stacks

Create a CloudFormation stack.

For information on how to create stacks and check each stack, please refer to the following page

After checking the resources in each stack, information on the main resources created this time is as follows

- ECR: fa-079

- CodeCommit: fa-079

- CodeBuild: fa-079

- CodePipeline: fa-079

- Lambda function: fa-079-function-ecs

Check the created resources from the AWS Management Console.

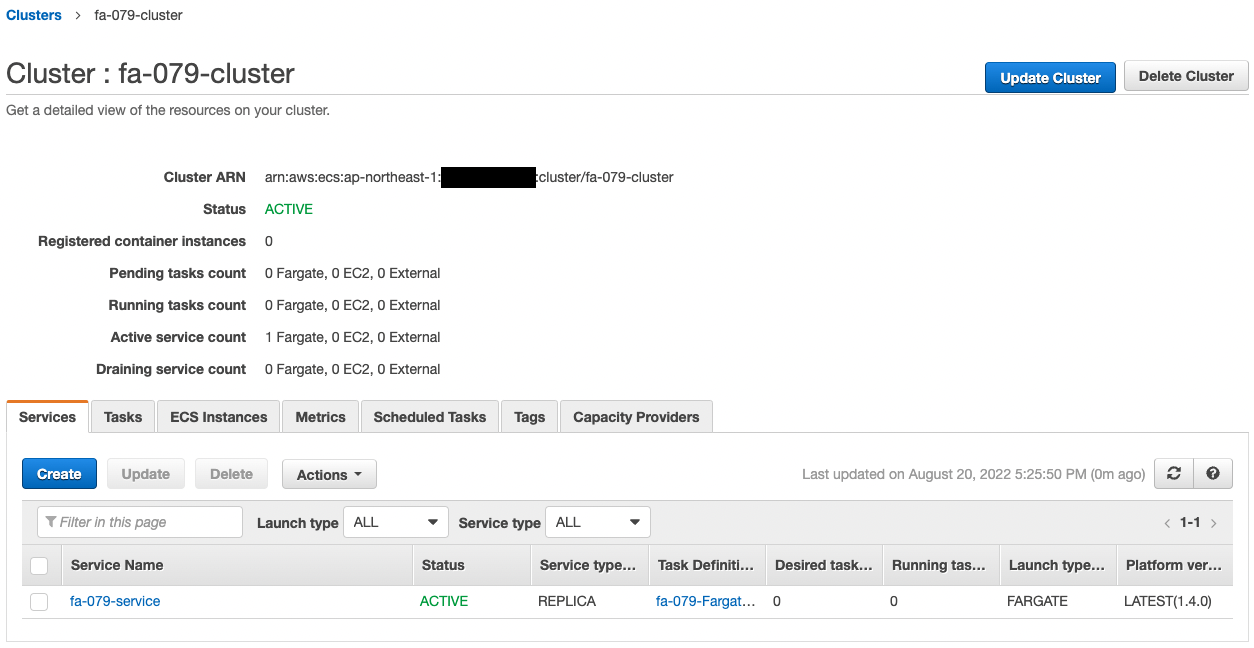

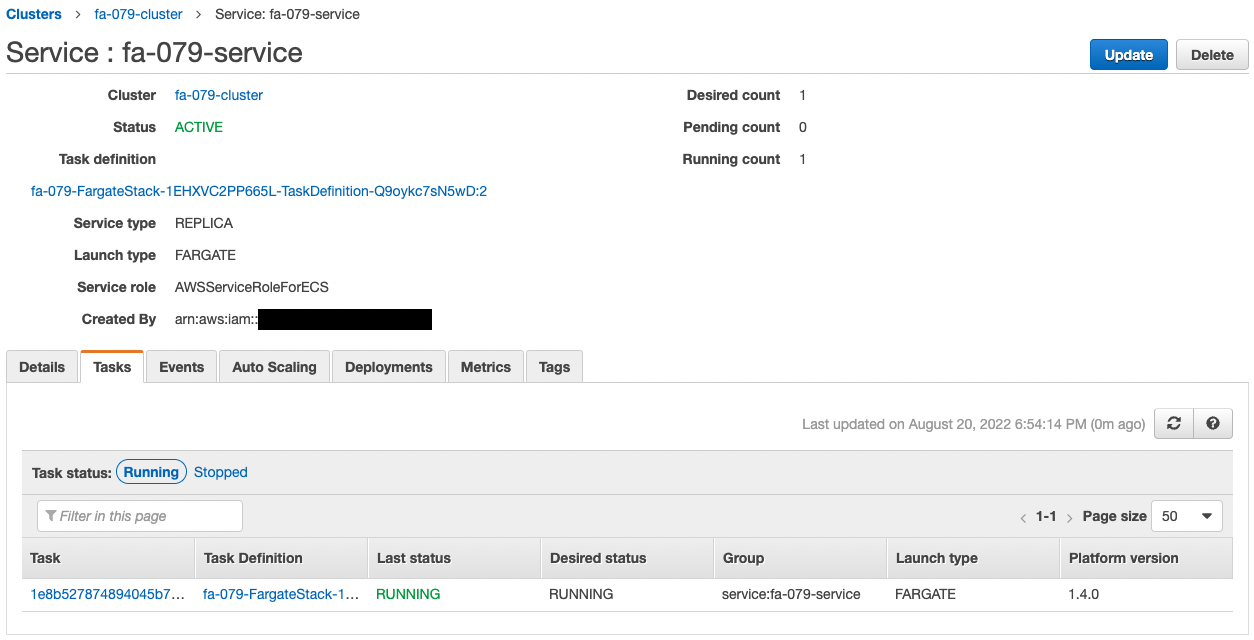

Check the ECS (Fargate) cluster service.

The cluster service has been successfully created.

The point is that the desired number of tasks is 0, which means that no tasks are launched when the Fargate service is first built.

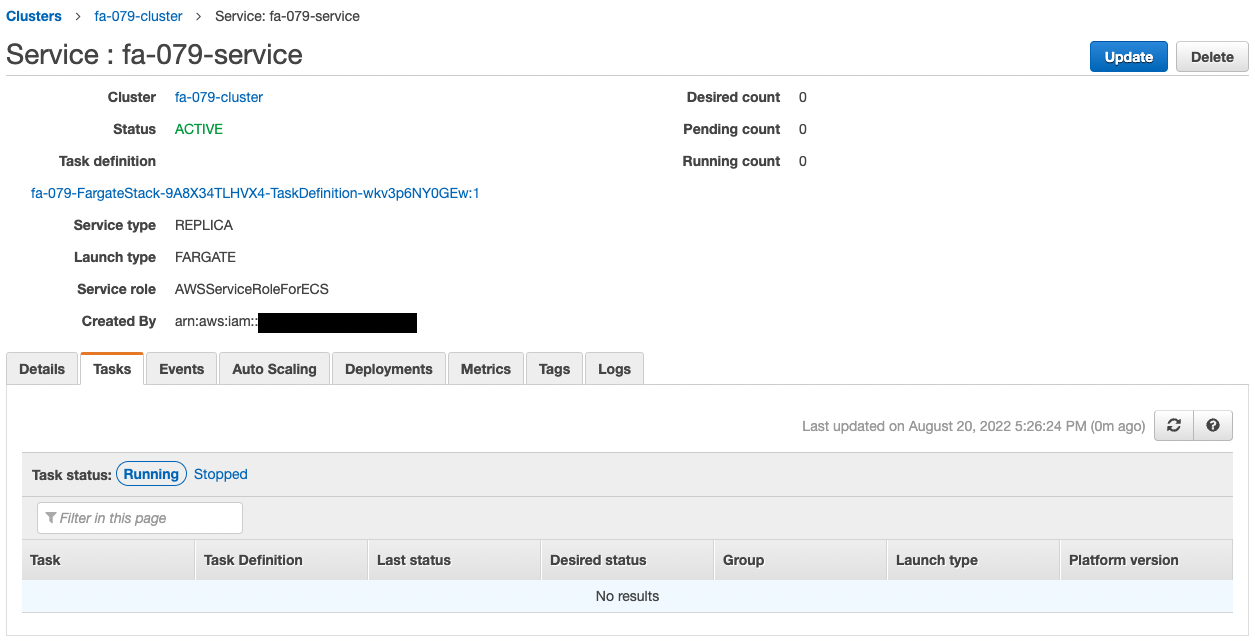

Check CodePipeline.

The pipeline execution has failed.

This is because creating the CodeCommit repository during CloudFormation stack creation triggered the pipeline.

Since no code has been pushed to CodeCommit yet, an error occurred during the pipeline execution.

Note the stages that were created.

The stage named Invoke, which calls the Lambda function, is placed at the end of the pipeline.

This means that the code pushed to CodeCommit will be used to build a Docker image, the image will be deployed to Fargate, and then the desired number of Fargate tasks will be changed.

Check Action

Now that we are ready, we push the code to CodeCommit.

First, clone the CodeCommit repository.

    $ git clone https://git-codecommit.ap-northeast-1.amazonaws.com/v1/repos/fa-079
    Cloning into 'fa-079'...
    warning: You appear to have cloned an empty repository.

An empty repository has been cloned.

Add the Dockerfile and main.py to the repository.

    $ ls -al
    total 8
    drwxrwxr-x 3 ec2-user ec2-user  51 Aug 20 08:31 .
    drwxrwxr-x 3 ec2-user ec2-user  20 Aug 20 08:31 ..
    -rw-rw-r-- 1 ec2-user ec2-user 187 Aug 12 11:01 Dockerfile
    drwxrwxr-x 7 ec2-user ec2-user 119 Aug 20 08:31 .git
    -rw-rw-r-- 1 ec2-user ec2-user 681 Aug 20 02:57 main.py

Push the two files to CodeCommit.

    $ git add .
    $ git commit -m "first commit"
    [master (root-commit) 7e41437] first commit
    ...
    2 files changed, 39 insertions(+)
    create mode 100644 Dockerfile
    create mode 100644 main.py
    $ git push
    ...
    * [new branch] master -> master

The push was successful.

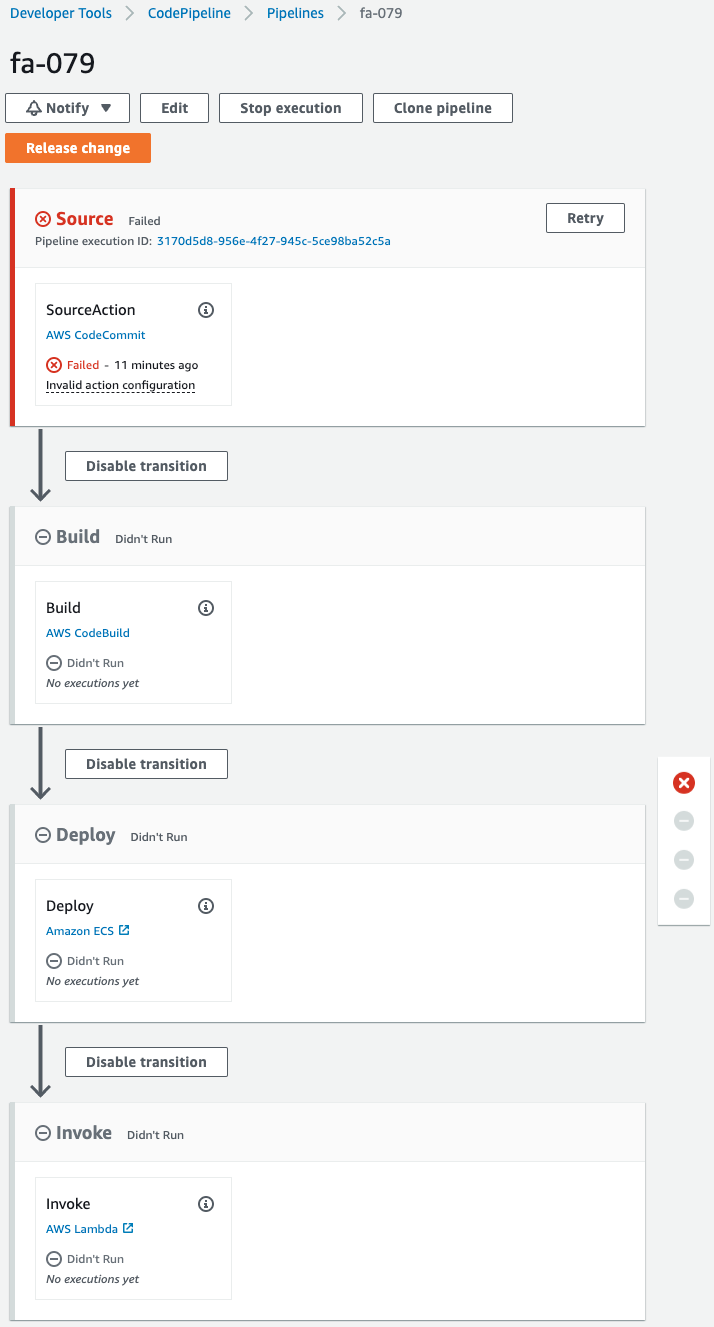

After waiting for a while, check CodePipeline again.

The pipeline has completed successfully.
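The same check can be made from a script instead of the console. As a sketch, the helper below summarizes a response shaped like the output of the CodePipeline GetPipelineState API; the sample response is hand-written and abbreviated, and "NotStarted" is a label invented here for stages that have not run yet.

```python
def stage_statuses(pipeline_state: dict) -> dict:
    """Map each stage name to the status of its latest execution."""
    statuses = {}
    for stage in pipeline_state.get("stageStates", []):
        # A stage that has never run has no latestExecution entry.
        latest = stage.get("latestExecution", {})
        statuses[stage["stageName"]] = latest.get("status", "NotStarted")
    return statuses


def all_succeeded(pipeline_state: dict) -> bool:
    """True only when at least one stage exists and every stage succeeded."""
    statuses = stage_statuses(pipeline_state)
    return bool(statuses) and all(s == "Succeeded" for s in statuses.values())


# Hand-written, abbreviated sample using the stage names from this article.
sample_state = {
    "pipelineName": "fa-079",
    "stageStates": [
        {"stageName": "Source", "latestExecution": {"status": "Succeeded"}},
        {"stageName": "Build", "latestExecution": {"status": "Succeeded"}},
        {"stageName": "Deploy", "latestExecution": {"status": "Succeeded"}},
        {"stageName": "Invoke", "latestExecution": {"status": "Succeeded"}},
    ],
}

print(stage_statuses(sample_state))
print(all_succeeded(sample_state))
```

With real credentials, the input would come from boto3.client('codepipeline').get_pipeline_state(name='fa-079').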

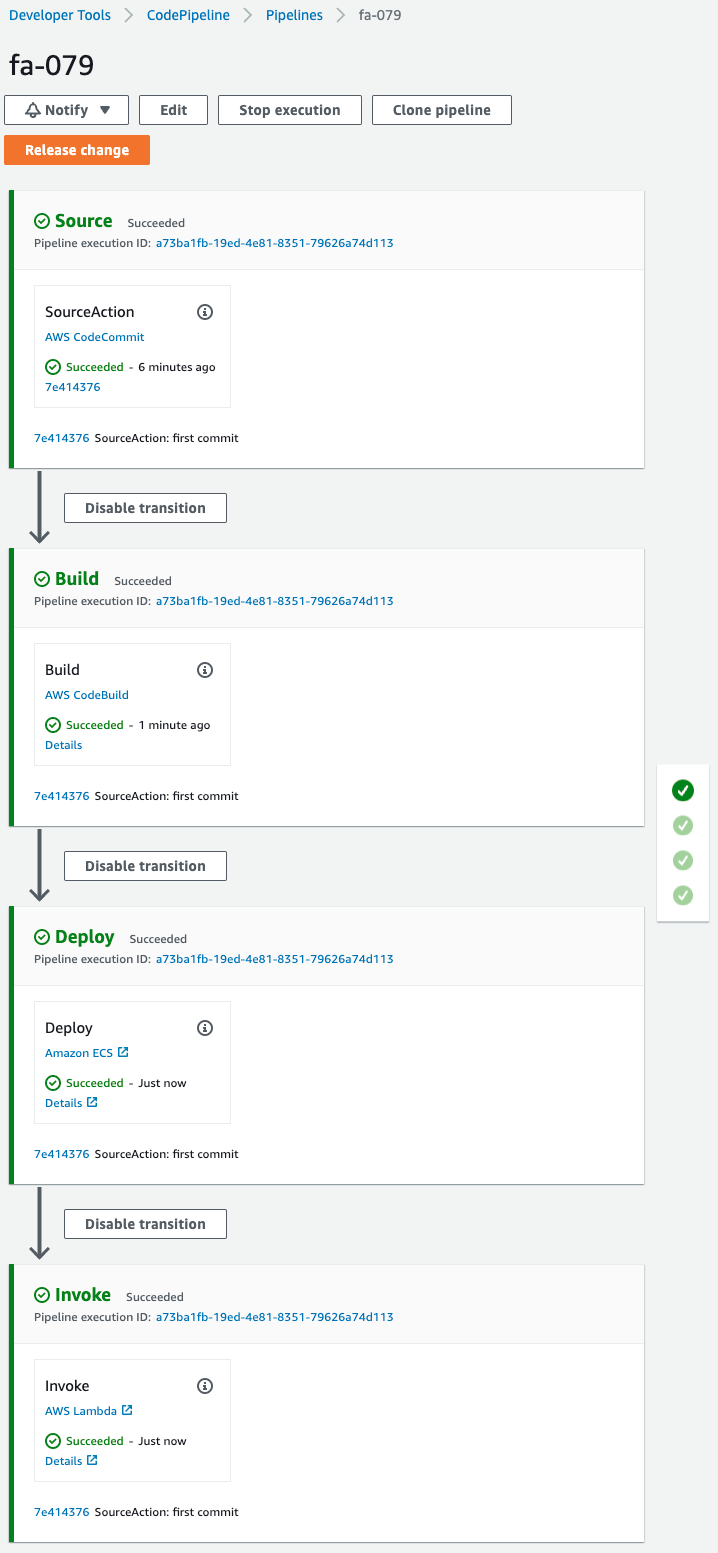

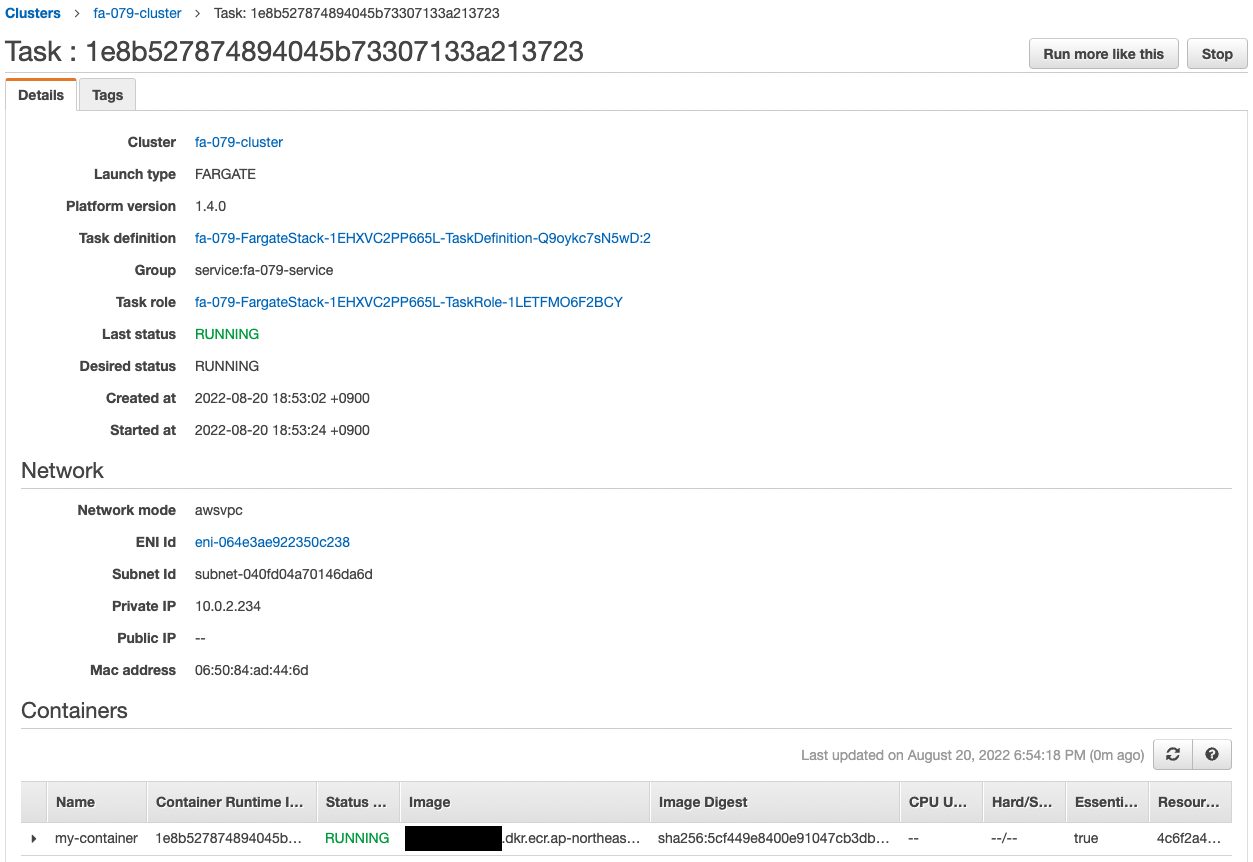

Check Fargate.

The desired number is now 1.

This means that the Lambda function has been invoked and the desired number has been changed.

Since the desired number is now 1, an ECS task is automatically generated.
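To confirm this without the console, the counts can be read from a DescribeServices response. The sketch below works on a hand-written, abbreviated response; the helper names are invented for this example.

```python
def service_counts(describe_services_response: dict) -> dict:
    """Extract the task counts of the first service in the response."""
    service = describe_services_response["services"][0]
    return {
        key: service[key]
        for key in ("desiredCount", "pendingCount", "runningCount")
    }


def has_reached_desired(counts: dict) -> bool:
    """True when every desired task is running and none is still pending."""
    return (
        counts["runningCount"] == counts["desiredCount"]
        and counts["pendingCount"] == 0
    )


# Hand-written sample: right after the Invoke stage, the task may still be pending.
starting = {"services": [{"desiredCount": 1, "pendingCount": 1, "runningCount": 0}]}
running = {"services": [{"desiredCount": 1, "pendingCount": 0, "runningCount": 1}]}

print(has_reached_desired(service_counts(starting)))
print(has_reached_desired(service_counts(running)))
```

With real credentials, the input would come from boto3.client('ecs').describe_services(cluster=..., services=[...]), the same call the Lambda function uses.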

Looking at the details of this task, we can see that the assigned private address is “10.0.2.234”.

Access the EC2 instance in order to make an HTTP request to the container.

Use SSM Session Manager to access the instance.

    % aws ssm start-session --target i-0c41c5926230b480c
    Starting session with SessionId: root-0c76e08548a26fec6
    sh-4.2$

For more information on SSM Session Manager, please refer to the following page

Use the curl command to access the container in the task.

    sh-4.2$ curl http://10.0.2.224:8080/
    Hello CodePipeline.

The container responded.

It is indeed the string we set up in the Bottle app.

This shows that the number of ECS tasks can be changed by calling the Lambda function within CodePipeline.

Summary

We were able to change the desired number of ECS tasks in the ECS (Fargate) service by invoking a Lambda function in the pipeline.